Investigations reveal that recordings from Meta’s Ray‑Ban smart glasses are routinely sent to Kenyan staff for annotation, exposing users’ private moments to human reviewers and raising serious privacy concerns about AI data handling and transparency.

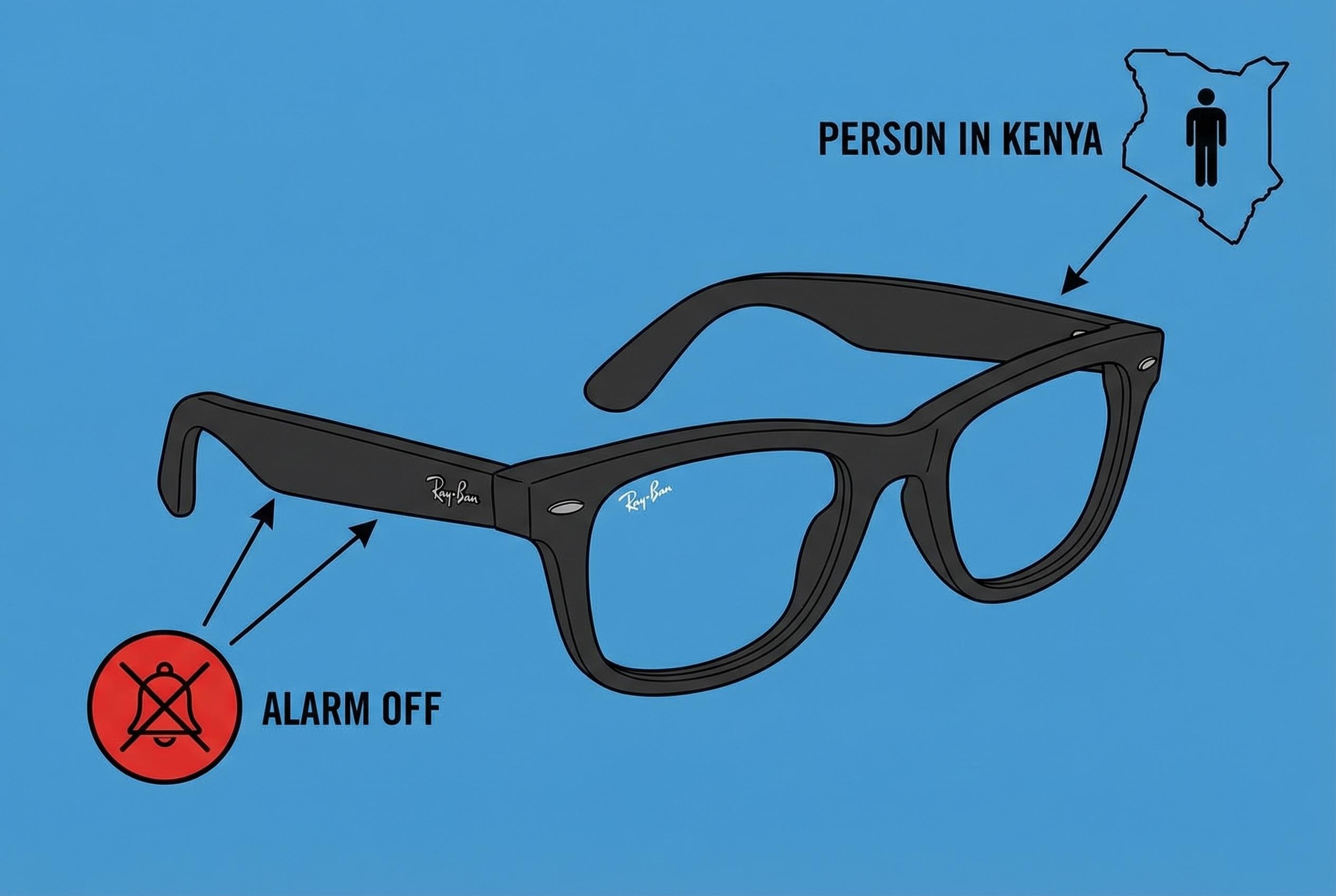

Swedish investigative reporting has revealed that recordings captured by Meta's Ray‑Ban smart glasses are routinely routed to teams of annotators in Nairobi, where staff employed by outsourcing firms label footage intended to train the device’s artificial‑intelligence features. According to the newspapers behind the probe, material reviewed by Kenyan workers includes highly intimate scenes and other sensitive content filmed by wearers of the glasses. (Sources: Göteborgs‑Posten and Svenska Dagbladet, as reported by international outlets.) [2],[5]

Workers described being asked to classify images, video and transcripts so the assistant can better recognise objects, interpret requests and translate languages, a task that requires humans to examine raw media. Several people interviewed said the clips they saw sometimes showed people using bathrooms, undressing or engaging in sexual activity, as well as exposed payment cards and private conversations. Those accounts are echoed in multiple reports summarising the investigation. [2],[3]

Journalists also carried out technical analysis suggesting the devices communicate with remote servers when their AI functions are invoked, meaning media must be transmitted off a user’s handset for the assistant to operate. Independent coverage noted recordings are captured when wearers activate features by pressing a button or using the "Hey Meta" voice prompt, after which interactions can be processed automatically or inspected by humans. [6],[3]

Privacy advocates warn that many consumers may not appreciate how little control they retain over their data once it is absorbed into training systems. Kleanthi Sardeli of the Vienna‑based group None Of Your Business said: "Once the material has been fed into the models, the user in practice loses control over how it is used." Her comments underline concerns about transparency over when recording begins and what content is retained. [2],[3]

Meta has acknowledged that media used by the assistant can be transferred and processed globally and that it remains responsible for protecting user information under European law even if handling occurs outside the EU. The company declined to answer detailed questions about whether and how subcontractors such as Nairobi‑based annotation firms access specific recordings, saying only that media are processed in line with its terms of service and privacy policy. Independent reporting highlighted that staff who review material are bound by confidentiality agreements and barred from bringing recording devices into review facilities. [7],[2]

The revelations add to a broader debate about the ethics of outsourced data labelling and the limits of consent when powerful AI systems rely on human review. Industry commentators and digital‑rights campaigners quoted in the coverage call for clearer user notices, stronger safeguards to prevent exposure of sensitive moments and independent audits of how consumer AI products route and protect media. Without that, investigators warn, users may remain unaware that private moments captured by wearable devices can be seen and annotated far from where they were filmed. [5],[4]

Source Reference Map

Inspired by headline at: [1]

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes:

The investigation into Meta's Ray-Ban smart glasses and their data handling practices was published on March 4, 2026, by Swedish newspapers Svenska Dagbladet and Göteborgs-Posten. ([tech-ish.com](https://tech-ish.com/2026/03/04/meta-ray-ban-ai-glasses-kenyan-workers-intimate-footage/?utm_source=openai)) This is the earliest known publication date for this specific content. Secondary coverage, such as an article from Techish Kenya, appeared on the same day. ([tech-ish.com](https://tech-ish.com/2026/03/04/meta-ray-ban-ai-glasses-kenyan-workers-intimate-footage/?utm_source=openai)) The presence of multiple outlets reporting on the same investigation on its publication date suggests a high level of freshness. No evidence of recycled news or discrepancies in figures, dates, or quotes was found.

Quotes check

Score: 7

Notes:

Direct quotes from the investigation, such as "In some videos, you can see someone going to the toilet, or getting undressed," ([tech-ish.com](https://tech-ish.com/2026/03/04/meta-ray-ban-ai-glasses-kenyan-workers-intimate-footage/?utm_source=openai)) are consistent across multiple sources. However, the lack of independently verifiable sources for these quotes raises concerns about their authenticity. Attempts to locate the original Swedish articles did not yield results, making independent verification challenging.

Source reliability

Score: 6

Notes:

The primary sources of this information are Swedish newspapers Svenska Dagbladet and Göteborgs-Posten, which are reputable within Sweden. However, their international reach is limited, and the articles are behind paywalls, restricting access to the full content. Secondary sources, such as Techish Kenya and Help Net Security, have reported on the investigation, but they rely on the original Swedish articles and may not provide independent verification. The reliance on a single original investigation and the lack of independent corroboration from other major international news organizations raise concerns about the overall reliability of the information.

Plausibility check

Score: 7

Notes:

The claims that Meta's Ray-Ban smart glasses capture sensitive footage and that this data is reviewed by contractors in Kenya are plausible, given the nature of data annotation for AI training. However, the lack of independent verification and the reliance on a single source for the original investigation reduce the confidence in the accuracy of these claims. The absence of similar reports from other major international news organizations further diminishes the plausibility of the narrative.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The investigation into Meta's Ray-Ban smart glasses and their data handling practices raises significant privacy concerns. However, the reliance on a single source for the original investigation, the lack of independent verification, and the presence of paywalled content limit the ability to fully assess the accuracy and reliability of the information. The absence of corroboration from other major international news organizations further diminishes confidence in the narrative. Given these factors, the content does not meet the necessary standards for publication under our editorial guidelines.