Artificial intelligence is no longer an abstract promise; it now influences everyday decisions from credit approval to fraud detection and customer service. Yet the people shaping these systems matter as much as the code: teams lacking varied life experiences risk embedding existing social biases in the models they build, and amplifying them. According to reporting and industry commentary, correcting that imbalance requires deliberate attention to who is designing, testing and deploying AI.

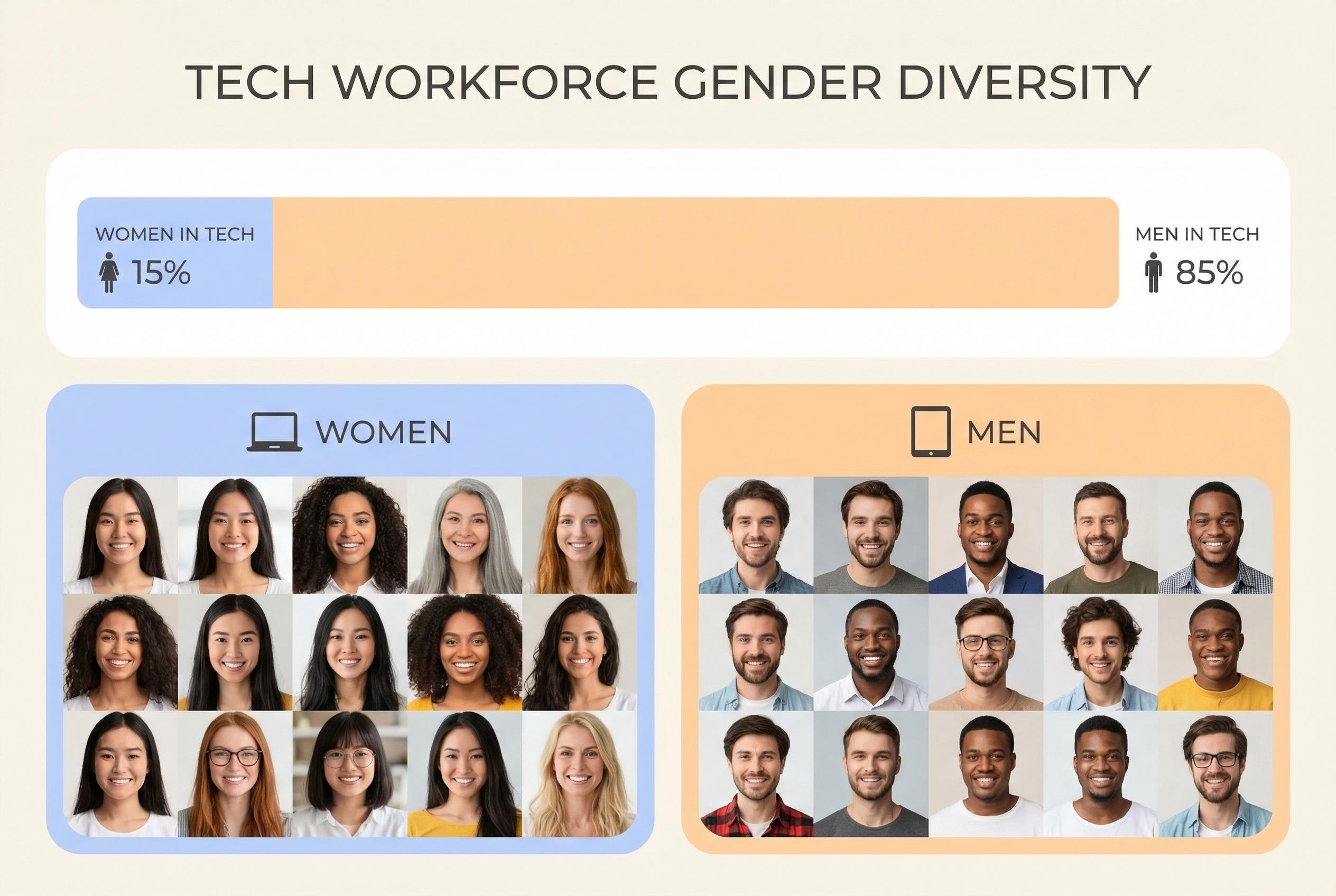

The technology and payments sectors continue to show a substantial gender gap that starts long before careers begin. Educational choices, cultural assumptions about computing and recruitment practices all contribute to a smaller pipeline of women entering technical roles. Experts argue that interventions in schools, visible role models and outreach will help expand that pipeline, while employers must scrutinise hiring and consider bold targets such as equal graduate intake to create a workforce that mirrors the populations AI will affect. According to ITWeb and workplace analysis, both early outreach and corporate recruitment reform are essential.

Beyond representation, gender diversity changes how problems are framed and solved. Diverse teams are more likely to identify dataset blind spots, question assumptions embedded in historical records and stress-test algorithms for disparate impacts. Research and industry commentary suggest that mixed-gender teams tend to be stronger at creative problem solving and innovation, which in turn reduces operational and reputational risk when systems are rolled out. SHRM and sector analyses point to improved outcomes when teams reflect a range of perspectives.

Women are increasingly visible in forums that set ethical AI agendas, helping to steer debates on fairness, transparency and explainability. Organisations and advocacy groups led by women are pushing ethical concerns from discussion into practice, arguing that ethics must be embedded at the design stage rather than treated as an afterthought. Observers say institutionalising these values across product development, procurement and board-level decision-making will be crucial to preventing bias from becoming encoded in deployed systems. Commentary from WomenTech and sector groups underscores this shift from principle to implementation.

The skills women commonly bring to collaborative work, such as high levels of emotional intelligence and an orientation toward empathy, have practical value when AI governs choices that affect people's lives. These so-called soft skills help teams anticipate unintended consequences and assess whether automated outcomes feel equitable across different communities. Academic and NGO research warns that excluding these perspectives not only undermines fairness but can also perpetuate gendered harms, making continuous monitoring and clear ethical standards essential.

Achieving responsible, trusted AI therefore demands both cultural and structural change: broaden the talent pipeline, set measurable diversity goals, embed ethics in engineering workflows and hold organisations accountable for outcomes. Industry commentators stress that diversity is not merely symbolic but a practical governance mechanism that improves robustness, equity and public trust in AI-enabled services. As payments and technology firms accelerate AI adoption, making gender inclusion a strategic priority will determine whether these systems serve everyone or entrench existing inequities.

Source: Noah Wire Services