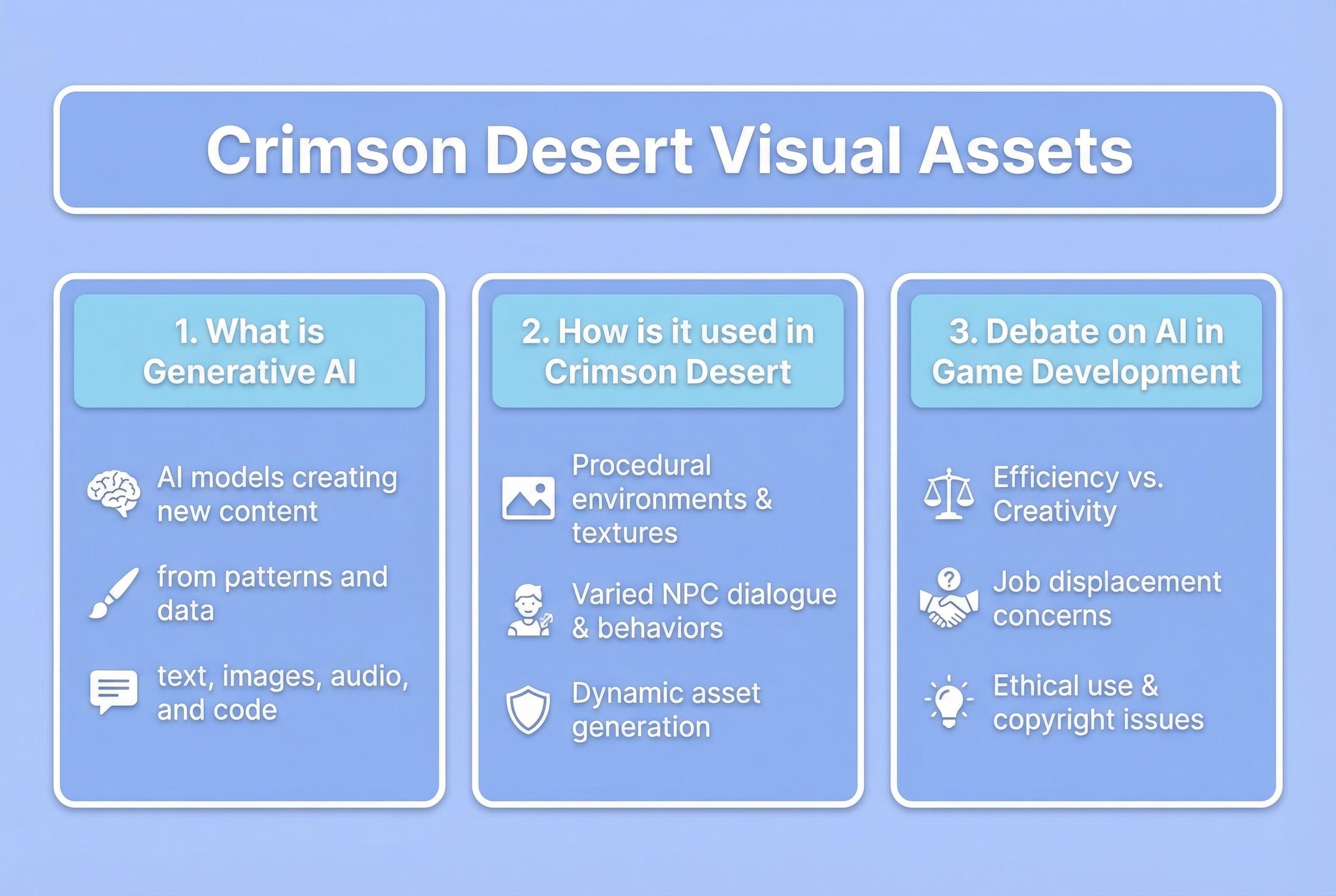

Crimson Desert's launch drew millions of players into its world amid mixed critical reaction, and within hours users were flagging several in-game paintings and signs as appearing to bear the hallmarks of generative artificial intelligence rather than human handiwork. IGN reported multiple forum threads and social posts showing odd anatomy and repeating visual artefacts in decorative assets found around the game's setting, Pywel, prompting players to ask whether finalised art had been produced by, or with the assistance of, AI. According to IGN, some community images, particularly paintings of horses and clustered figures, exhibit distortions that players argue are unlikely to be the result of conventional human error. Industry reporting and player screenshots have amplified those concerns. (Sources: TechRadar; Wikipedia: Artificial intelligence controversies).

Pearl Abyss has sought to reassure parts of its audience about voice acting, with marketing director Will Powers telling media that main and quest NPCs are voiced by human actors in English, Korean and Chinese at launch, though he stopped short of an absolute guarantee that every single line across the vast game contains no synthetic contribution. That public statement has helped draw a line between the studio's declared use of human talent for voices and the separate, emerging questions around visual assets. (Source: TechRadar).

The debate taps into a broader, unresolved set of issues about authorship, disclosure and platform policies. Game storefronts such as Steam require publishers to disclose the use of generative AI for content, and failure to do so can run afoul of those rules; players and commentators have pointed out that Crimson Desert's store page contained no such disclosure at the time the allegations surfaced. Past controversies in the sector, from billboard-style accusations in high-profile racing and shooter franchises to an indie title losing an award after an AI-derived piece reached a final build, have shown how reputational backlash can emerge even when sales remain unaffected. (Sources: Wikipedia: Artificial intelligence controversies; Ars Technica).

Legal and ethical questions about creative ownership further complicate the conversation. Government and institutional decisions have already indicated that fully AI-generated works may not meet traditional standards of human authorship for copyright protection, a position that has reverberated across art and publishing communities. Those decisions feed into concerns over whether studios using generative tools should disclose them, how credits should be assigned, and what recourse exists for the workforce whose skills may be affected. (Source: Ars Technica).

For players and observers, the core issue is verification. Community sleuthing can highlight anomalies in textures and compositions, but determining whether assets were produced, edited or merely inspired by AI requires confirmation from the developer or an independent audit of source files. IGN reported that it had contacted Pearl Abyss seeking clarification; until the studio issues a clear statement about its asset pipeline, the debate will rest on player interpretation of in-game artefacts and the evolving norms around disclosure. (Sources: IGN reporting as described; TechRadar).

The episode illustrates a turning point for the games industry as it negotiates the integration of generative tools into creative pipelines. If studios employ AI for concepting, placeholders or final art, transparent disclosure and updated platform policies appear likely to become central to how companies manage community trust. Meanwhile, the conversation is already refocusing on whether existing rules and cultural expectations adequately address the rapid emergence of generative techniques across both visual and audio production. (Sources: Wikipedia: AI slop; Wikipedia: Artificial intelligence controversies).

Source: Noah Wire Services