Model and actress Savannah Adwoa Mensah says she first realised how vulnerable her online identity had become when she spotted a flawless, unfamiliar image of herself used to sell a herbal skincare product on Facebook. She publicly warned followers: "If you see an ad of me promoting this product, it’s not me. It’s an AI-generated image used without my consent." According to local reporting, her experience is far from isolated as synthetic likenesses proliferate across Ghanaian social media.

The misuse stretches beyond images. Journalists and broadcasters report cloned voices and fabricated endorsements being deployed to market dubious medical remedies and commercial products, sometimes without any identifiable company behind them. Industry analysts and international reporting have linked such scams to organised fraud rings that exploit generative technology to scale deception rapidly.

High-profile incidents elsewhere in the region underline how quickly false material can spread. Broadcasters in South Africa were impersonated in realistic videos that promoted investment scams and drew hundreds of thousands of views before platforms intervened, demonstrating the speed and reach of synthetic media when coupled with social networks.

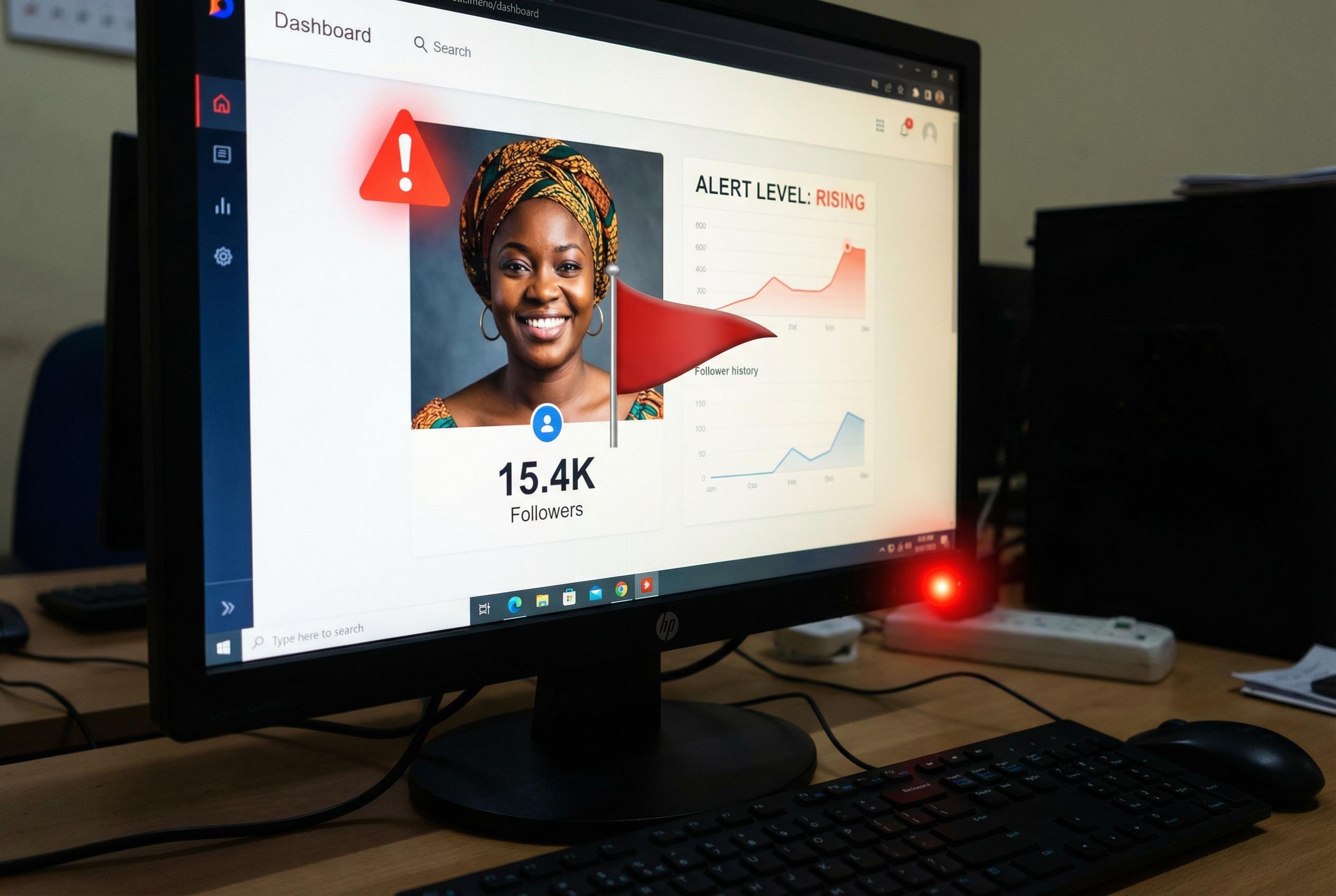

Data firms and verification specialists have sounded the alarm about a sharp uptick in deepfake-enabled fraud. A recent industry analysis found a multi-fold rise in cases linked to synthetic identities in late 2024, warning that these techniques have moved from niche experiments to tools that cause measurable financial and reputational harm.

Ghana’s existing laws offer routes for redress but have yet to be tested thoroughly against the novel mechanics of AI impersonation. Legal practitioners note that unauthorised use of a person’s image or voice may engage data-protection provisions and constitutional privacy guarantees, but they caution that courts have limited precedent for assigning liability in cases where synthetic media are generated and distributed by opaque actors.

Enforcement faces practical hurdles. Investigators and prosecutors contend with scant forensic capacity to trace the provenance of synthetic content, challenges in preserving admissible digital evidence and jurisdictional obstacles when campaigns originate overseas. Observers say those gaps make quick takedowns and prosecutions difficult, even when the harms are clear.

Alongside legal responses, media literacy advocates emphasise prevention. Trainers and communications scholars have urged the public to develop verification habits ahead of elections and other high-stakes moments, offering practical checks to distinguish manipulated media from authentic content and reduce the likelihood of viral amplification.

Security analysts warn the political implications are acute: AI-crafted audio or video can be tailored to sway voters, smear opponents or trigger financial consequences, particularly around election cycles. Commentators advise a mix of platform responsibility, stronger verification systems and public awareness campaigns to shore up trust in digital information flows.

For those targeted, the consequences are immediate and personal. Senior journalist Maame Esi Nyamekye Thompson responded online to a counterfeit diabetes advert bearing her likeness: "This is still ongoing. I never did this advert lol." Her reaction captures the indignity and confusion victims face as they try to disentangle their reputations from synthetic falsehoods while regulators, platforms and civil society scramble to catch up.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [7], [2]

- Paragraph 2: [7], [5]

- Paragraph 3: [5], [7]

- Paragraph 4: [5], [7]

- Paragraph 5: [4], [6]

- Paragraph 6: [6], [7]

- Paragraph 7: [3], [6]

- Paragraph 8: [2], [3]

- Paragraph 9: [7], [3]

Source: Noah Wire Services