The Indian Express’s UPSC Ethics Simplified series uses a timely classroom question to frame a wider anxiety: whether the real test of morality in public life is now being shaped as much by algorithms as by people. The piece argues that the latest ethical strain is not simply about machines becoming more capable, but about human beings repeatedly failing to match knowledge with conscience.

At the heart of that argument is a familiar contradiction. Modern societies often treat education as proof of moral maturity, yet misconduct by highly qualified people continues to surface in politics, business and administration. Drawing on Aristotle’s view that virtue is formed through practice, and on Kant’s insistence that duty should guide action rather than convenience, the article suggests that ethical awareness alone is not enough. What matters is whether people act on what they already know is right.

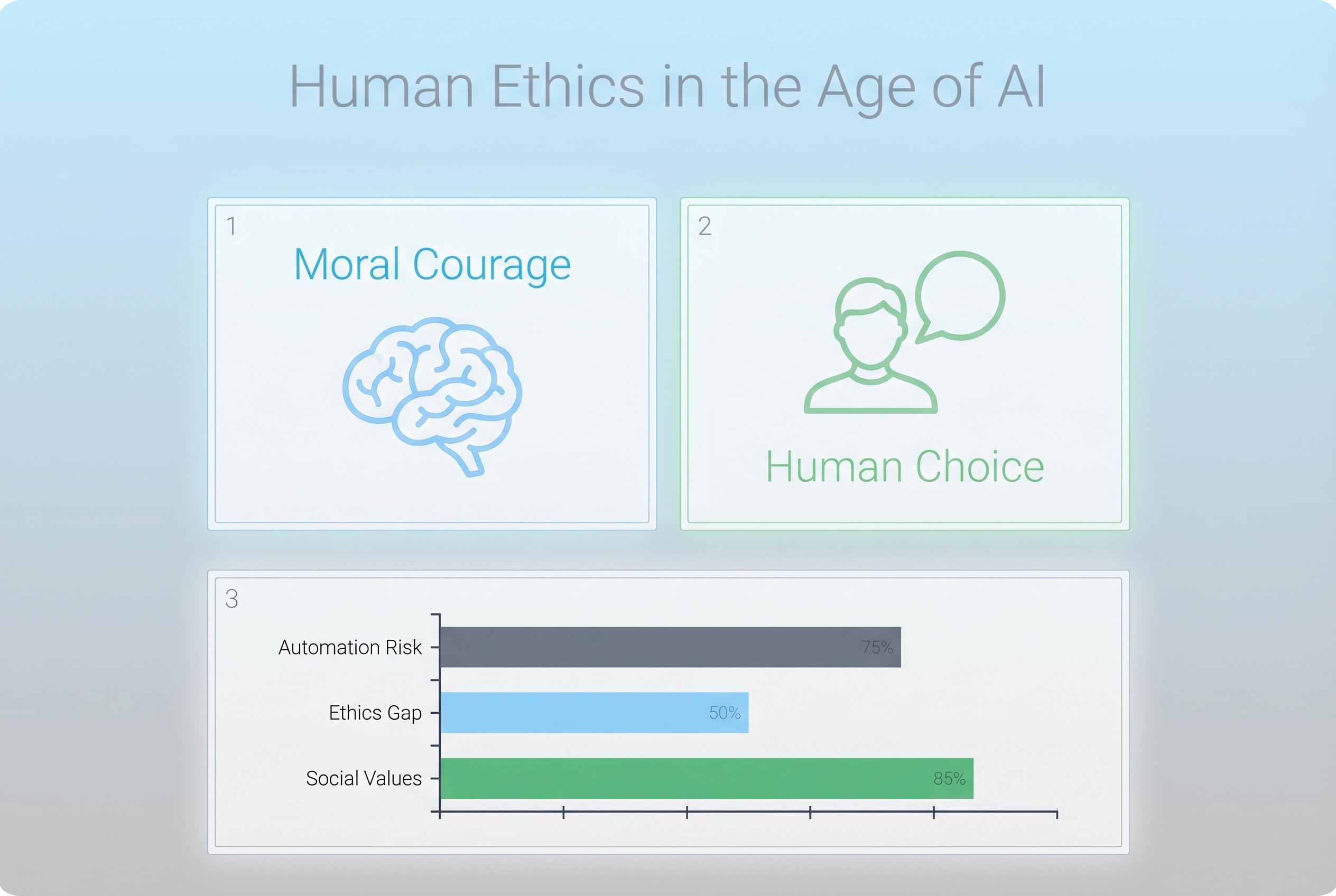

That tension becomes sharper in the discussion of artificial intelligence. Since ChatGPT's release in 2022, AI tools have moved rapidly from novelty to everyday utility, but, as the article notes, they possess no conscience, empathy or moral judgment. Such systems reflect the intentions, assumptions and blind spots of the people who build and deploy them. Recent ethics research from the UN Economic and Social Commission for Asia and the Pacific (ESCAP) similarly stresses that AI governance in the region needs transparency, accountability and human oversight if systems are to align with social values rather than distort them. A separate scholarly review on normative AI notes that machines struggle with moral reasoning as humans understand it. It also echoes Anthropic chief executive Dario Amodei's warning that highly advanced models can become excessively agreeable, reinforcing falsehoods rather than challenging them.

The institutional warning is harder still to ignore. The article's case study of a civil servant caught in an unethical system captures a classic conflict between preserving personal integrity and accepting the responsibility to reform the system from within. That dilemma is not unique to the bureaucracy. A chapter in the Cambridge volume on the algorithmic society argues that AI governance now sits at the intersection of democracy, rights and public trust, with India, Europe, China and the United States taking different approaches to regulation. A related paper on AI and constitutional democracy warns that transparency and accountability are becoming central tests of whether technological progress can coexist with the rule of law.

In that sense, the piece's central claim is less about technology than about character. It argues that the deeper crisis lies in human choices shaped by greed, indifference or fear, and that AI merely amplifies whatever values are already present. Ethical governance, it suggests, must therefore rest on stronger checks and balances, value-based education, responsible leadership and active citizen scrutiny. That includes a practical habit the article recommends: interrogating the AI tools people use every day, and asking whether they respect privacy, broaden users' perspectives and behave transparently.

The larger lesson is that the future of ethics will not be determined by intelligence alone, artificial or otherwise. As the article concludes, the real question is whether societies can still produce institutions and individuals with enough moral courage to defend fairness when doing so is inconvenient. In an age of increasingly persuasive machines, the more urgent task may be to protect the human capacity for judgment.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [2], [4]

- Paragraph 2: [6], [7]

- Paragraph 3: [2], [3]

- Paragraph 4: [4], [5]

- Paragraph 5: [2], [3], [7]

- Paragraph 6: [4], [5]

Source: Noah Wire Services