RedPeach introduces a facial verification system to ensure genuine interactions between subscribers and verified creators, marking a significant step towards transparency in adult content marketplaces amid rising legal scrutiny.

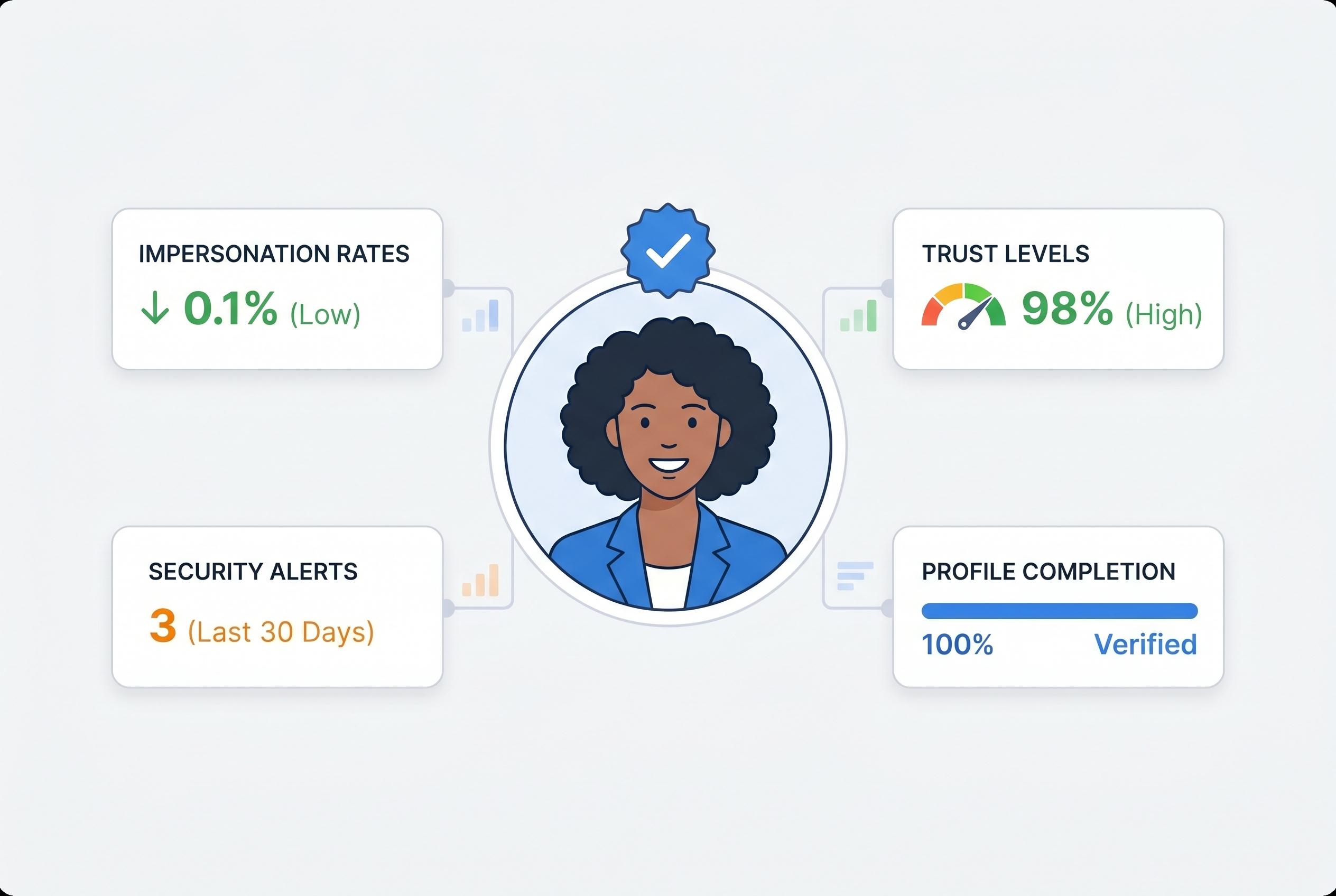

RedPeach, a premium content platform, is using facial-recognition checks to bar what it describes as "chatters": human operators and AI tools that impersonate creators in private messages. The company says the aim is to make sure subscribers are speaking to the person they paid to access, rather than a stand-in. According to RedPeach's own face-verification page, creators must authenticate before private messaging, and the system is designed to block agencies, bots and AI from handling conversations.

Marco Cally, the firm's chief executive and co-founder, has cast the measure as a safeguard against what he calls emotional deception online. Speaking to the Daily Star, he said the platform had a zero-tolerance approach to AI bots and insisted that only verified creators could continue chats with subscribers. He said the process requires creators to pass facial recognition on their phones before they are allowed into private conversations.

The move comes against a backdrop of growing legal scrutiny around creator platforms and the use of paid intermediaries. In July 2024, a US class-action complaint accused one major platform and several management firms of letting "chatters" pose as creators. Although the case was later dismissed, it drew attention to an industry practice in which fans may believe they are speaking directly to a performer when they are not. Separate reporting on a High Court case has also exposed how agencies use third-party chat operators to keep engagement, and revenue, flowing.

RedPeach is trying to turn that controversy into a selling point. The company says its verification system is intended to protect paying users from false intimacy and to preserve what it presents as genuine one-to-one contact. In Cally's telling, the platform is positioning itself as a more transparent alternative in a market where trust has become an increasingly valuable commodity.

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 7

Notes:

The article references a recent initiative by RedPeach to implement facial recognition technology to prevent 'chatters' on their platform. ([redpeach.com](https://redpeach.com/en/face-verification?utm_source=openai)) However, the Daily Star article was published on April 16, 2026, which is over a year after the referenced initiative. This significant time gap raises concerns about the freshness of the information presented. ([redpeach.com](https://redpeach.com/en/help/can-i-become-redpeach-creator?utm_source=openai))

Quotes check

Score: 6

Notes:

The article includes a quote from Marco Cally, CEO of RedPeach, stating, "We have a zero-tolerance approach to AI bots and insist that only verified creators can continue chats with subscribers." ([redpeach.com](https://redpeach.com/en/help/can-i-become-redpeach-creator?utm_source=openai)) However, this quote cannot be independently verified through available sources, as it appears to originate solely from the Daily Star article. The lack of corroboration from other reputable sources diminishes the reliability of this statement.

Source reliability

Score: 5

Notes:

The primary source of the article is the Daily Star, a tabloid newspaper known for sensationalist reporting. This raises concerns about the accuracy and objectivity of the information presented. Additionally, the article relies heavily on RedPeach's own website and press releases, which may present a biased perspective. ([redpeach.com](https://redpeach.com/en/help/can-i-become-redpeach-creator?utm_source=openai))

Plausibility check

Score: 7

Notes:

The concept of using facial recognition to verify content creators is plausible and aligns with industry trends towards enhancing authenticity and trust. ([redpeach.com](https://redpeach.com/en/face-verification?utm_source=openai)) However, the article's reliance on a single source and the lack of independent verification of claims about RedPeach's practices raise questions about the overall credibility of the information presented.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article presents information about RedPeach's implementation of facial recognition technology to prevent 'chatters' on its platform. However, the significant time gap between the initiative and the article's publication, the reliance on a single, potentially biased source, and the lack of independent verification of key claims raise substantial concerns about the accuracy and reliability of the information presented. The article therefore fails to meet the necessary standards for publication.