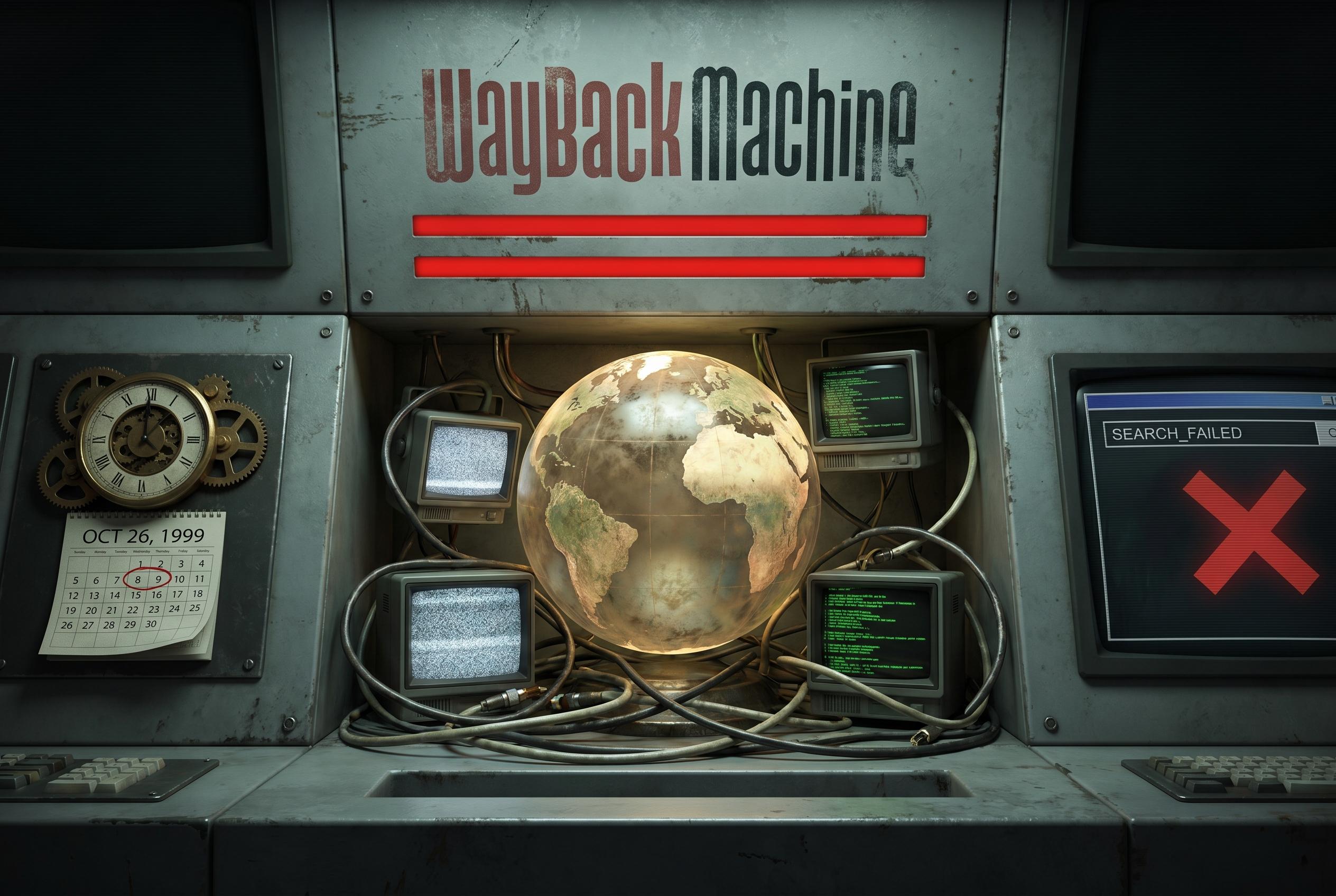

The Internet Archive’s Wayback Machine has long functioned as the web’s institutional memory, preserving pages that might otherwise disappear through deletion, redesign or pressure from powerful interests. In the Philippines, the archive helped preserve material linked to the controversy over articles about Senator Tito Sotto and Pepsi Paloma after those pages were taken down, underlining how easily the online record can be altered once a publisher removes content.

That role is now being strained by an argument that has nothing to do with old links and everything to do with artificial intelligence. According to reporting by WIRED, a growing number of major news organisations are blocking the Internet Archive’s crawler because they fear archived material is being used by AI companies to train models without permission. The New York Times has said the issue is that its archived content is being used in ways that violate copyright law and compete with its business.

The scale of the shift is significant. Early this year, Nieman Lab found that 241 outlets across nine countries were restricting at least one of the archive’s bots, while other reports said those blocking the archive now include major names such as USA Today Co., The New York Times, Reddit and, in a more limited form, The Guardian. By early 2026, the Wayback Machine had passed one trillion archived pages, according to reporting cited by Rappler, but that expanding library is increasingly being fenced off even as demand for historical web records remains strong.

The contradiction is sharpest where publishers themselves rely on the archive. USA Today has used the Wayback Machine in reporting on changes to US Immigration and Customs Enforcement detention statistics, even as its parent company blocks the archive from preserving its own pages. Mark Graham, who leads the Wayback Machine, told WIRED that publishers can benefit from the archive’s records while limiting access to them, describing the broader standoff with AI firms as collateral damage.

There is, however, a real reason publishers are nervous. A Washington Post analysis found that Internet Archive data has appeared in major training datasets, and that has reinforced fears that publicly archived pages can be repurposed downstream for commercial AI systems. Industry observers say the problem is not simply technical but economic: as search summaries and chatbots reshape how audiences reach news, publishers are looking for ways to stop their content being reused without compensation.

Against that backdrop, digital-rights groups are urging newsrooms not to punish the archive for disputes it did not create. Fight for the Future, the Electronic Frontier Foundation and Public Knowledge backed an open letter praising the Internet Archive’s role in preserving the public record, saying it has helped keep citations alive on millions of Wikipedia entries and does not engage in paywall circumvention or irresponsible scraping. The EFF warned that if major publishers keep locking the archive out, large parts of the historical record may simply vanish, even as courts continue to sort out the separate legal fight over AI training.

Source: Noah Wire Services