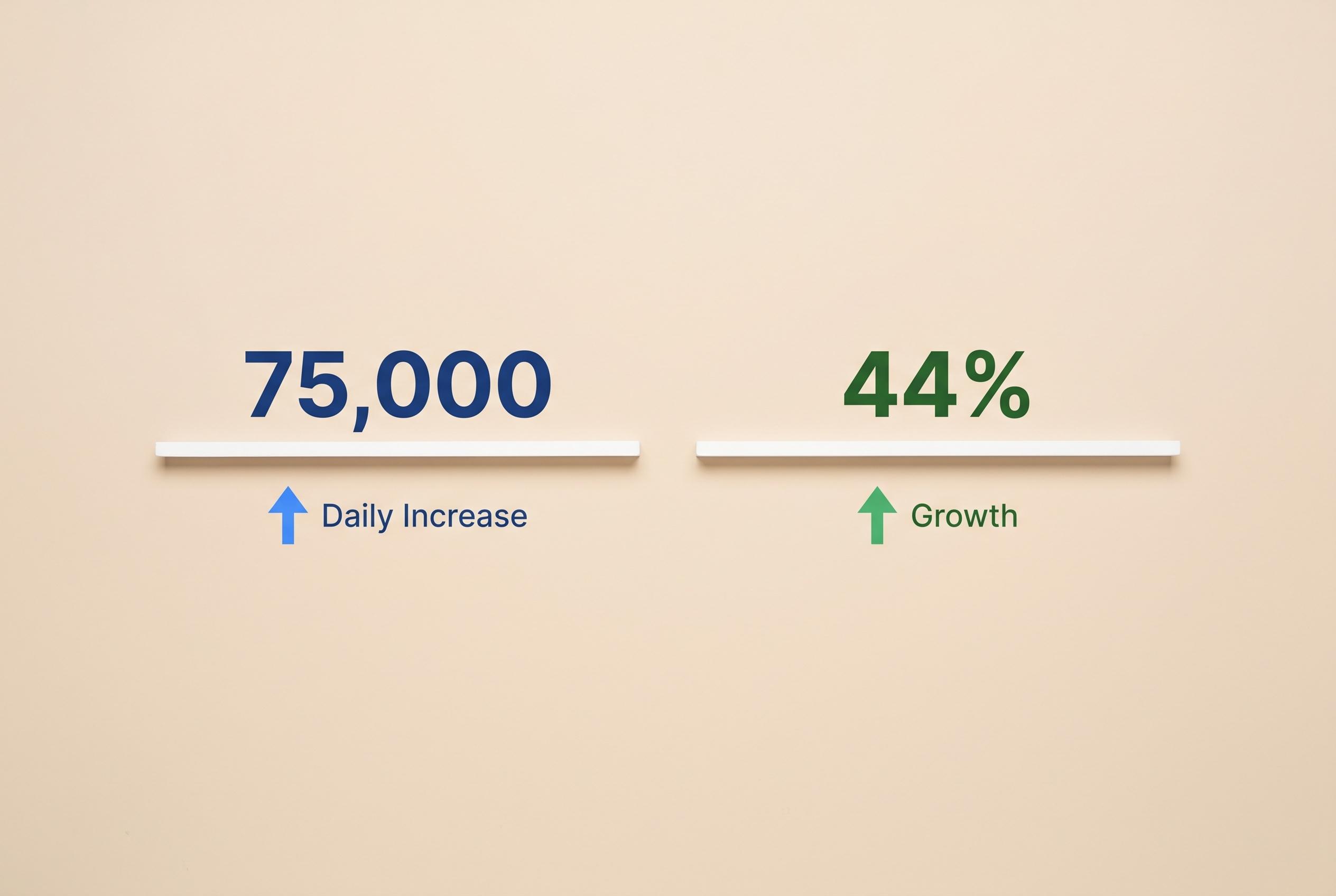

Deezer says artificial intelligence is now reshaping the music platform at industrial scale, with nearly 75,000 fully AI-generated tracks uploaded each day, or 44% of all new music delivered to the service. The company says the surge has turned what once looked like a novelty into a problem of fraud, transparency and artist pay.

The figures also show how fast the trend has accelerated. Deezer said its detection system identified about 10,000 AI-made tracks a day when it launched in January 2025, rising to roughly 20,000 in April, more than 30,000 by September and around 60,000 by January 2026 before reaching the current level. That is a more than sevenfold increase in less than 18 months.

Yet the upload boom has not translated into real listening. Deezer says AI-generated music accounts for only 1% to 3% of streams on its platform, and that as much as 85% of those plays are flagged as fraudulent and removed from royalty calculations. The company says that matters because fake streams can divert money away from legitimate artists and inflate the apparent popularity of low-value content.

Deezer has responded with a series of measures. It introduced its AI tagging system in June 2025, later said it had identified more than 13.4 million AI-generated tracks during 2025, and then made its detection technology available for licensing in January 2026. It also says AI-made tracks are excluded from recommendations, while high-resolution storage for such files has been scaled back.

Other platforms and companies are taking different approaches. Deezer says Qobuz has built its own detection tool, Apple Music has introduced transparency labels that rely on record labels and distributors to declare AI use, and Spotify has backed the DDEX standard for disclosure while testing a credits feature for AI-related contributions. Separately, Deezer says licensing deals with groups including Sacem and Hungary’s EJI show that detection tools are moving from internal defence to commercial infrastructure.

For Deezer, the issue is not AI-assisted music itself but deception. The company said a study it commissioned found most listeners could not reliably tell AI music from human-made tracks, while a large majority wanted it clearly labelled. That points to a market where synthetic music may be accepted, so long as listeners know what they are hearing and rights holders are protected.

Source: Noah Wire Services