Newsroom leaders keep making the same mistake with artificial intelligence: they treat the decision to adopt a technology as if that decision alone were enough. The result is a series of clumsy roll-outs that breed suspicion rather than confidence, even among journalists who are broadly open to using AI in useful, limited ways.

The latest backlash has centred on the Cleveland Plain Dealer and its digital arm, Cleveland.com. According to reports from local outlets, the newsroom has used AI to help identify story ideas in nearby counties and to draft stories from reporters’ notes, part of an effort to stretch coverage into places that no longer have full reporting teams. But the reaction has been especially hostile to the more theatrical experiments, including AI-generated vertical videos featuring a talking building and avatars of newsroom staff. In one case, a prospective reporter reportedly withdrew from consideration over the paper’s use of AI, underscoring how quickly trust can evaporate when the technology is not explained clearly.

McClatchy has faced a different kind of revolt. The company has been using what it calls a "content scaling agent" to recast stories in formats aimed at different audiences, including summaries and short-form video scripts. The Wrap reported that journalists were alarmed to learn their bylines might be attached to AI-produced material, while union representatives at McClatchy publications have said the tool raises contractual and ethical concerns. One news report said some Sacramento Bee journalists have refused to let their names be used on material generated by the system, describing the practice as a breach of public trust.

There is a valid argument for using automation to widen the reach of good journalism. That is especially true in local news, where shrinking staffs have left many communities under-covered. But the execution in these cases has been so poor that it risks poisoning the broader debate. Instead of creating a careful model for human-led AI assistance, these roll-outs have given critics fresh evidence that newsroom leaders are improvising in public.

The most telling failures are organisational, not technical. As Poynter has argued, newsroom executives often skip the hard work of defining a real problem, speaking to audiences before launch and bringing sceptical staff into the process early. Sitara Nieves, Poynter’s vice president of teaching and organisational strategy, said leaders who do not consult widely before introducing tools that change how people work end up spending far more time repairing the damage later. Kristen Hare, a Poynter faculty member, put it more bluntly: journalists will probe weak ideas until they collapse.

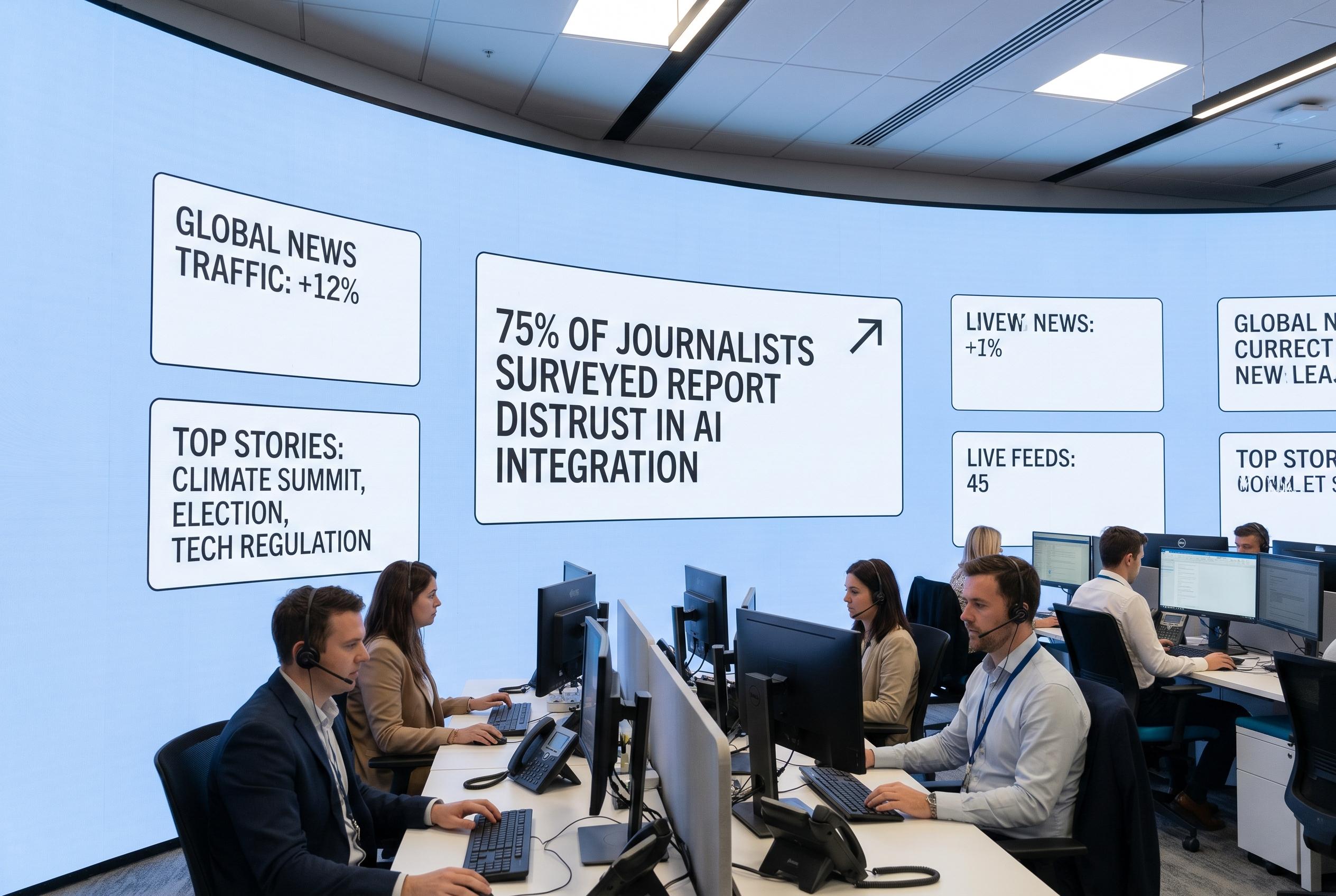

Transparency is part of the answer, even if it is uncomfortable. People want to know when AI is involved, yet disclosure can also trigger suspicion, especially in a climate where many readers are already wary. Still, hiding the extent of the technology is worse. If organisations want audiences to accept AI as a limited newsroom aid rather than a substitute for reporting, they need to explain exactly what it does, what humans still control and why it is being used at all.

For now, the industry’s lesson is less about machine learning than about leadership. Newsrooms may be able to use AI to support reporting, summarise work or expand coverage, but only if they stop presenting it as a spectacle. The aim should not be to dazzle staff and readers with futuristic branding, but to show that the technology is boring, supervised and genuinely useful.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [2], [3]

- Paragraph 2: [2], [6]

- Paragraph 3: [3], [4], [5], [7]

- Paragraph 4: [1], [2], [3], [4], [5], [6], [7]

- Paragraph 5: [1]

- Paragraph 6: [1], [2], [3], [4], [5], [6], [7]

Source: Noah Wire Services