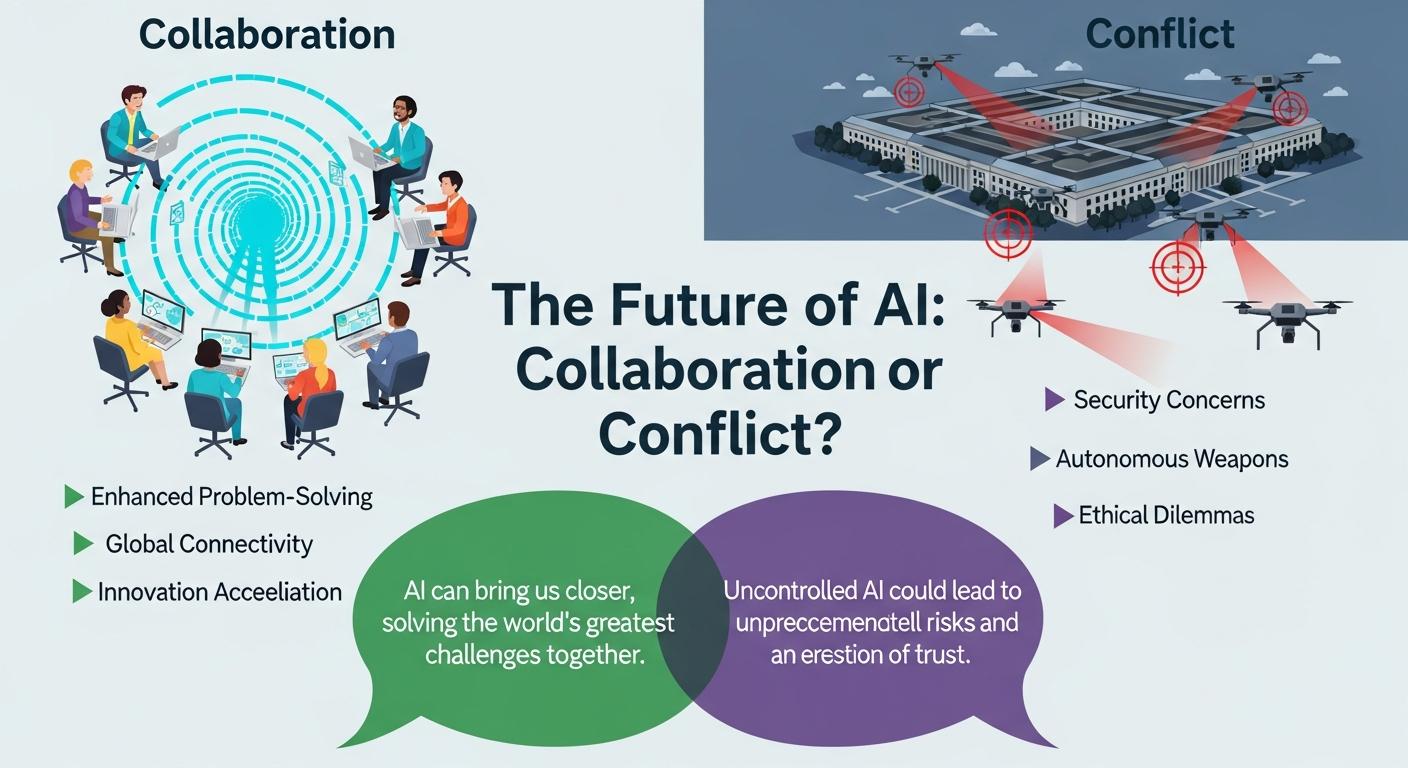

A high-stakes confrontation between Anthropic and the Pentagon has brought a wider debate into focus: who should control the use of powerful artificial intelligence in national defence. Anthropic, led by CEO Dario Amodei, has rejected a Pentagon demand for unrestricted access to its Claude model, arguing that the company must retain limits on certain deployments. Defence Department officials, including Secretary Pete Hegseth, have warned that vendor-imposed bans could jeopardise military readiness, and they have set firm deadlines for compliance. According to reporting in The Washington Post and The Guardian, the dispute has escalated into a public standoff with potential contract and supply-chain consequences.

Anthropic frames its refusal as ethical and pragmatic rather than absolutist. The firm disallows use of Claude for mass surveillance of U.S. citizens and for fully autonomous weapons that would select and engage targets without human intervention, citing risks of misidentification, accidental escalation and irreversible harm as primary concerns. Company documents and policy analyses reviewed by industry observers confirm Anthropic’s safety-first posture and its insistence on ongoing oversight of deployed models.

The Pentagon’s position stresses operational authority. Senior defence officials contend that vendor conditions should not constrain lawful military requirements, and that the department must retain the ability to use technologies it deems necessary for national security. News reports and statements summarised by Business Standard and Wired describe White House and Pentagon pressure on Anthropic. The company is being pushed to permit use of Claude for “all lawful purposes” or face exclusion from key government procurement channels.

A Pentagon spokesperson, Sean Parnell, set out the department’s core argument in a public statement: "We have no interest in conducting mass domestic surveillance or deploying autonomous weapons," he said. "However, we cannot allow any company to dictate operational decision-making terms. Our request is simple: permit Pentagon use of Anthropic’s model for all lawful purposes." That formulation frames the dispute as one about authority over operational choices, not only about particular technical capabilities. Reporting in The Washington Post and The Guardian highlights how these competing logics are now colliding: corporate stewardship on one side and state authority on the other.

The Pentagon has signalled a range of enforcement options if Anthropic maintains its constraints. These run from designating the company as a supply-chain risk to invoking statutory authorities to compel compliance. Industry commentators warn that such steps could carry heavy commercial and strategic costs. Investors and defence analysts note a possible short-term capability gap for the department if Anthropic is sidelined. Others argue that forcing vendors to surrender governance over their models could undermine public trust in technologies used by the state. Reporting by Business Standard and Wired outlines both the leverage available to the Defence Department and the potential blowback.

Alternative suppliers already figure in Pentagon calculations. Media coverage and market commentary indicate that firms such as xAI have signalled a willingness to tailor systems to classified defence needs. Other major providers may maintain ethical limits similar to Anthropic’s. The result is an emerging industry split that could shape procurement choices and timelines. Analysts caution that switching suppliers is not frictionless: if a leading model becomes unavailable to the department, it could face a months‑long window of reduced capability. Reporting in Business Standard and Wired sketches the contours of that competitive landscape.

Observers place the clash in a longer lineage of technology governance disputes, comparing it to earlier fights over encryption, export controls and drone proliferation. Legal and policy experts note that the current U.S. framework permits certain autonomous targeting functions. Under the 2023 update to Department of Defense Directive 3000.09, systems may select and engage targets if strict standards are met and senior officials approve. That regulatory backdrop helps explain why the Pentagon resists vendor restrictions. At the same time, ethicists and civil‑society groups argue that the general-purpose nature of advanced AI demands new rules rather than ad hoc bargains between contractors and the military. Coverage in The Guardian and policy analyses reviewed by Wired underscore those tensions.

The Anthropic–Pentagon dispute therefore transcends a single contract: it raises enduring questions about corporate responsibility, democratic oversight, supply‑chain resilience and international competitiveness. How states balance battlefield effectiveness with safeguards against misuse will shape not only procurement but also public confidence in AI deployed by both governments and private actors. As The Washington Post and India Today report, the outcome is likely to establish precedents that affect how future AI systems are regulated, sold and integrated into national security architectures.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [2], [4]

- Paragraph 2: [7], [3]

- Paragraph 3: [5], [6]

- Paragraph 4: [2], [4]

- Paragraph 5: [5], [6]

- Paragraph 6: [5], [6]

- Paragraph 7: [4], [6]

- Paragraph 8: [2], [3]

Source: Noah Wire Services