The recent clash between Anthropic and the Pentagon has reignited a vital debate about how democracies should govern powerful new technologies and the role local governments can play in shaping ethical deployment. According to reporting by the Associated Press, Defence Secretary Pete Hegseth demanded unrestricted access to Anthropic’s Claude system for military use; Anthropic’s chief executive, Dario Amodei, declined, explicitly rejecting applications he said would enable mass domestic surveillance and fully autonomous weapons without reliable human oversight.

That refusal prompted an immediate and sharp response from the federal apparatus. The Washington Post reported that the Pentagon gave Anthropic a Friday deadline and signalled it could invoke the Defense Production Act or designate the firm as a “supply-chain risk” if it did not comply, while stressing it reserves the right to use AI for “all lawful purposes.” The standoff demonstrates how national security priorities can collide with corporate commitments to safety.

Anthropic was founded by researchers who left another high-profile AI lab over safety concerns, and its leadership has repeatedly framed the company’s mission around restraint and human-centred controls. Tom’s Hardware highlighted Amodei’s insistence that certain uses are unethical, and that the company supports limited, human-supervised defence applications rather than systems that choose or strike targets autonomously. That principled stance has now put Anthropic at odds with a Pentagon seeking broader operational flexibility.

The dispute has practical consequences for public procurement and for citizens’ everyday choices. As one commentator in Hawaiʻi noted, subscribing to a particular AI service is a de facto civic act when suppliers differ on fundamental safeguards. The episode illustrates that vendor selection is not merely technical procurement; it is an expression of policy preferences that can align with or resist government appetites for surveillance and automation.

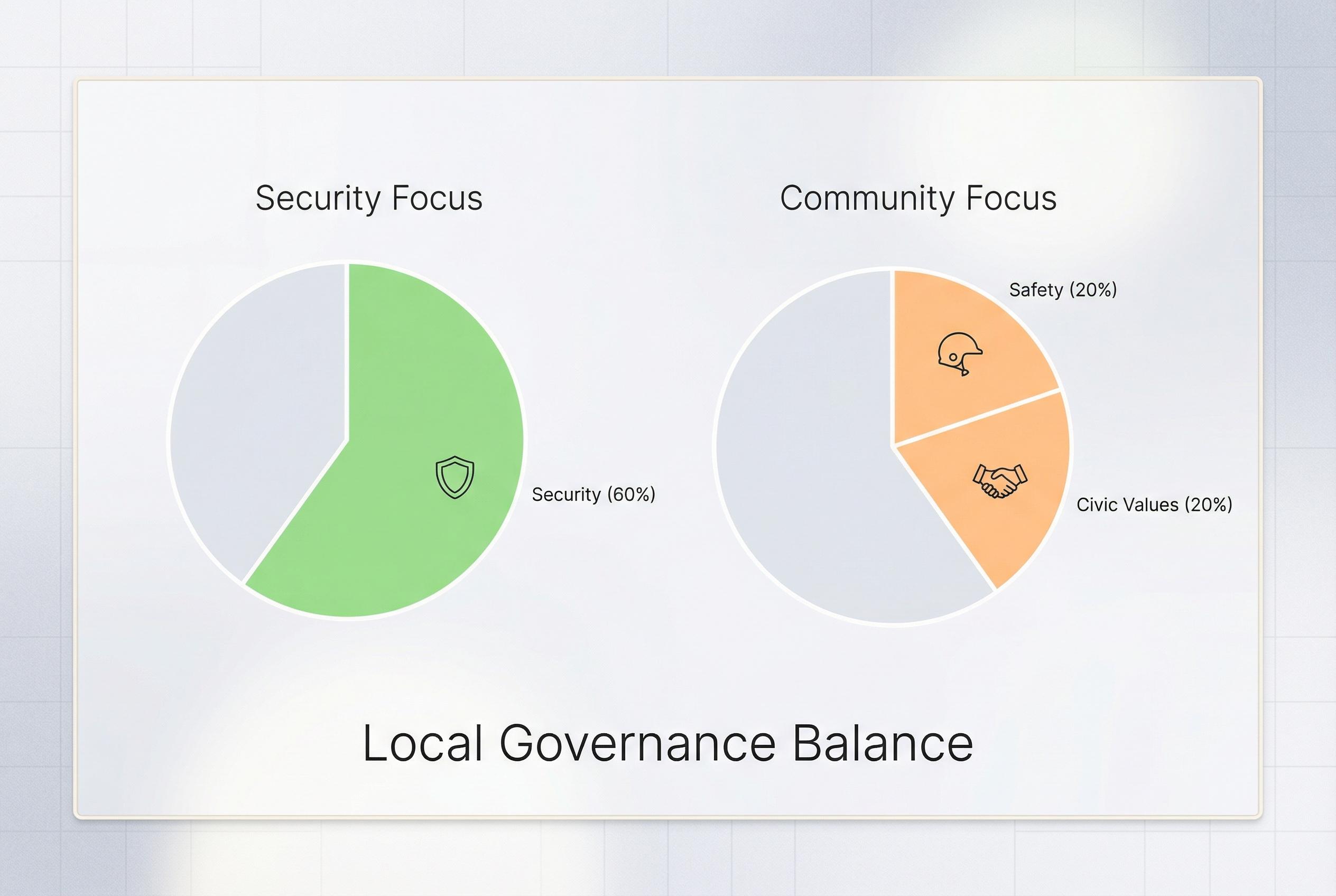

State and local governments therefore face a clear policy choice about how to adopt and regulate AI. The Associated Press has documented the Pentagon’s posture and the company’s response, offering a cautionary tale: without clear statutory guardrails, public agencies may find themselves coerced into accepting tools that contravene community standards. Legislatures can and should set criteria for which vendors are eligible for state contracts, and require “human-in-the-loop” safeguards for government use.

Practical models already exist. A recent survey of state pilot projects, exemplified by Pennsylvania’s review of ChatGPT in government operations, found efficiency gains alongside serious risks, including hallucinated legal citations and fabricated job requirements that only human review caught. Those findings suggest pragmatic rules: permit AI to augment staff but mandate human oversight, recordkeeping, and routine audits to prevent error and abuse. The Week and other outlets covering the national debate have emphasised that such safeguards enjoy bipartisan support across multiple states.

Data portability and interoperability measures offer another tool to rebalance power away from dominant platforms and give citizens greater control. Utah’s forthcoming Digital Choice Act provides a legislative template by obliging platforms to enable users to download and move their data and by requiring structural interoperability. Adopting similar protections in other states would reduce vendor lock-in and increase accountability for how AI systems are trained and deployed. Tom’s Hardware and other outlets covering the Anthropic dispute underline that structural reforms can limit the scope for misuse even when federal policy is uncertain.

The Anthropic episode is therefore a prompt for local leaders to act decisively. Consumers can express preferences through subscriptions and procurement choices; lawmakers must translate those preferences into durable rules that protect privacy, ensure human control over life-critical decisions, and preserve civic values. If Hawaiʻi and other states want to shape their digital futures, they will need binding procurement standards, data protections modelled on recent state laws, and clear operational limits on government use of generative AI. The alternative risks ceding those decisions to ad hoc federal pressure or to vendors whose commercial incentives do not align with public interest.

Source: Noah Wire Services