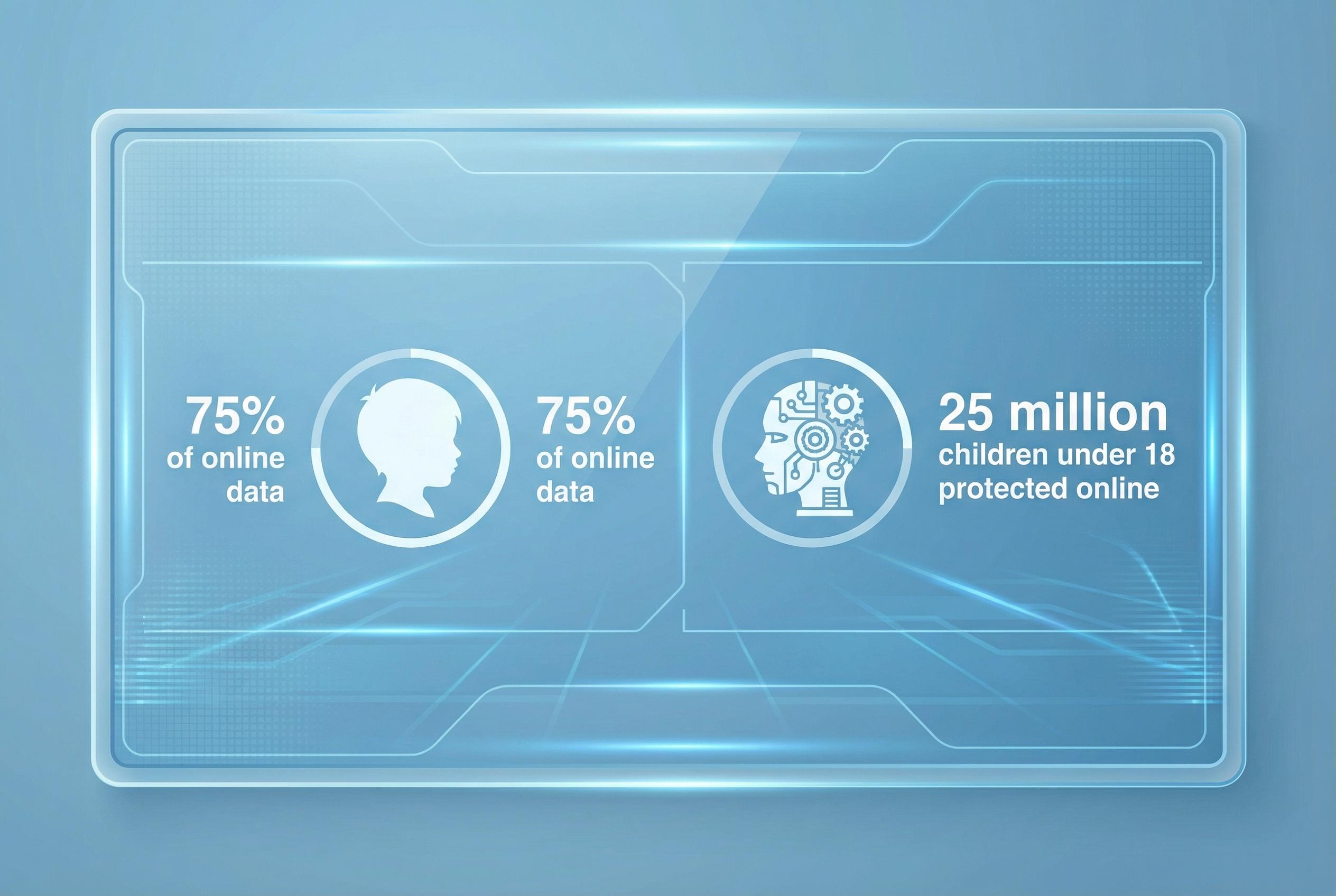

The White House’s new National AI Legislative Framework has placed the safety of children at the forefront of its proposals, signalling that the future shape of US AI policy will be judged largely by its capacity to shield younger users from harm. According to the White House, the framework’s seven thematic pillars begin with child protection and emphasise measures to empower parents while seeking to harmonise federal action across other areas such as intellectual property, free speech and workforce development.

At the heart of the US approach is a mix of platform accountability, parental tools and so-called “reasonable” age assurance measures intended to reduce harms such as deepfakes, sexual exploitation and the encouragement of self-harm. The administration’s blueprint frames these elements as part of a broader, innovation-focused federal strategy that aims to preempt a patchwork of state rules.

Yet implementing these ambitions runs straight into technical and legal complexity. Industry commentators and legal advisers have warned that asking AI services to “reduce risks” without narrowly defined technical standards can create uncertainty for operators, who may face hard trade-offs between compliance, functionality and commercial viability. The framework’s preference for a lighter regulatory hand raises the prospect of litigation over ambiguous duties and of platforms adopting defensive behaviours that could curb experimentation.

Debates over age verification encapsulate the tension between effectiveness and privacy. The US text leans toward parental attestation and less intrusive verification methods, reflecting concerns about privacy and potential litigation, whereas European regulators have been more willing to explore robust technical approaches, including document checks and biometric options. UNICEF’s policy guidance on AI for children provides a rights-based counterpoint, urging systems that protect privacy, ensure fairness and support children’s wellbeing rather than relying solely on technical gates.

That trade-off is political as much as technical: tougher identity checks may be more effective at keeping underage users out of harmful interactions, but they also raise questions of proportionality and discrimination. Legal advisers also note that the intersection of AI training practices and intellectual property law remains unresolved. The White House has asked Congress to address this separate but related area, which could further complicate regulatory design.

The blueprint’s architects argue that a unified federal regime will prevent a burdensome thicket of state rules and preserve US competitiveness in AI. Critics caution that a “light-touch” stance, designed to protect innovation, risks leaving gaps in protection unless it is accompanied by clearer technical standards, rigorous enforcement mechanisms and support for technologies that detect and mitigate dynamic, generative harms. Media reporting highlights this balancing act between nurturing an AI sector and protecting vulnerable populations.

Experts outside government emphasise that lawmaking must be complemented by education, research and inclusive design. UNICEF and international advisers stress child-centred requirements for AI: measures should promote development and inclusion, guard against discrimination, protect data and be transparent to both children and caregivers. Voices from other jurisdictions warn of the long-term behavioural consequences of children growing up with personalised AI companions, and call for longitudinal study and policy responses tailored to developmental impacts.

Ultimately, shielding children from AI-related harms will demand more than statutory exhortations. The success of the White House framework will depend on how Congress translates high-level principles into enforceable standards, how platforms balance parental controls and privacy, and how societies invest in digital literacy and child-centred design. The central question is not merely whether rules exist, but whether they help build a digital environment that supports the next generation’s safety, rights and flourishing.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [2], [4]

- Paragraph 2: [2], [5]

- Paragraph 3: [5], [6]

- Paragraph 4: [1], [3]

- Paragraph 5: [5], [2]

- Paragraph 6: [5], [6]

- Paragraph 7: [3], [7]

- Paragraph 8: [1], [4]

Source: Noah Wire Services