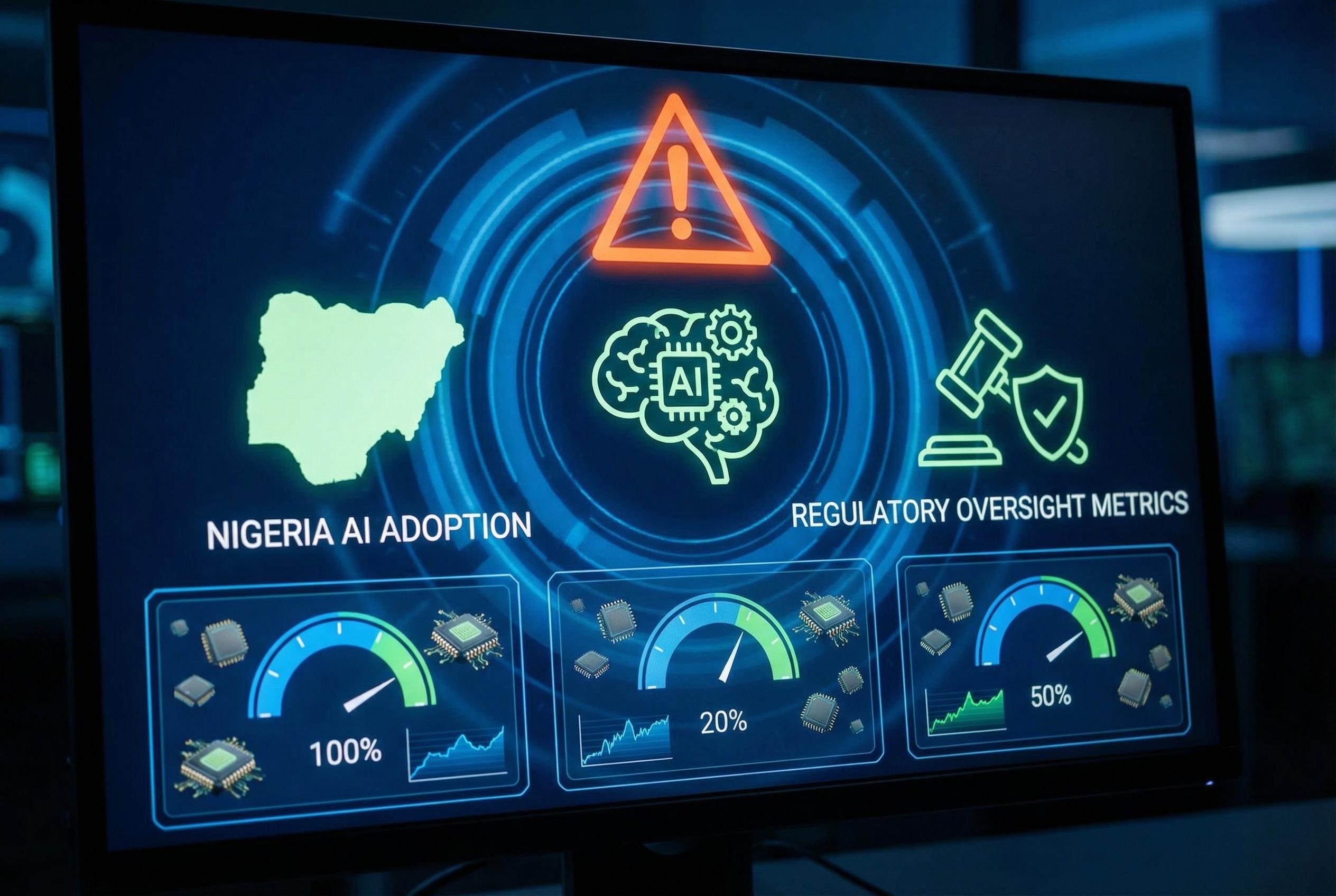

In boardrooms across Nigeria, talk of “transformation” has become routine, while the concrete implications of artificial intelligence for governance remain largely unexamined. Industry surveys show near-ubiquitous corporate adoption of AI, with many firms moving beyond pilot projects into organisation-wide use and strengthening privacy teams and budgets as they scale. According to a Zoho study, adoption rates among Nigerian companies are exceptionally high, and the majority have bolstered their privacy capabilities in response. (Inspired by headline at: [1])

That rapid uptake has outpaced the development of formal oversight. Reports from professional services firms and governance commentators warn that AI is now a strategic risk that can amplify existing weaknesses in controls, and that regulators are increasingly attuned to those gaps. Kreston Pedabo’s analysis concludes that automated decision-making exposes firms to novel operational, legal and reputational hazards if left unmanaged.

Regulatory momentum is closing the window for voluntary compliance. Although Nigeria lacks a single comprehensive AI law, government and regulatory bodies have been active: NITDA’s National Artificial Intelligence Policy work, a federal white paper and draft national strategies have signposted future frameworks, while sector regulators are issuing guidance that effectively mandates technology-driven controls in specific contexts. Legal advisers tracking global frameworks see this trend as part of a broader convergence toward enforceable AI oversight.

The financial sector illustrates how adoption has translated into regulatory expectation. Central bank reports and fintech reviews show extensive use of AI for fraud detection, credit assessment and customer service; recent central bank guidance requires automated monitoring systems and advanced analytics to combat money laundering, setting concrete compliance timetables for banks and payment firms. That pattern, in which supervisors compel technology-enabled controls, signals that passive governance is no longer an option for regulated firms.

Boards face three recurrent implementation gaps that amplify institutional risk. External analyses and governance practitioners point to inadequate AI-specific policies, weak ongoing scrutiny of third-party suppliers and underpowered whistleblowing channels. Even where organisations have formal charters, independent commentators and advisory firms warn that controls become meaningless without active oversight, continuous vendor due diligence and credible, independently managed speak-up mechanisms.

Third-party risk is particularly salient in Nigeria’s context, where vendor capability or integrity can change rapidly. Professional services guidance stresses that initial onboarding checks must be supplemented by continuous monitoring of partners’ operational resilience and model stewardship; failure to do so leaves the contracting organisation exposed to regulatory fallout and reputational damage when a supplier’s AI system underperforms or breaches data protections.

Ethics and fairness are central to credible AI governance. PwC Nigeria and KPMG Nigeria have highlighted how biased models and opaque decision-making erode trust and can entrench social inequalities; both firms argue for transparency, fairness testing and explicit accountability lines. Practical measures recommended include routine bias and accuracy assessments, clear allocation of executive responsibility for AI risk, and board-level reporting on system performance and harms.

The innovation ecosystem and public sector readiness present a further governance mismatch. Commentators on Nigeria’s AI startup scene note vigorous commercial experimentation, but also observe that state institutions often lack capacity to evaluate, adopt or scale domestic solutions responsibly. That gap has implications for public service delivery and for the country’s standing in international AI diplomacy if governance cannot keep pace with technical progress.

Closing the governance gap will require both structural and cultural change. Legal and advisory sources advise boards to map where AI influences decisions, mandate up-to-date and documented governance policies, test systems (including third-party models) for accuracy and bias, and designate senior executives accountable for AI risk, with visibility at board level. These steps, supported by an open culture that rewards reporting and independent escalation, are presented as the baseline for responsible oversight rather than optional best practice.

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [2], [5]

- Paragraph 2: [3]

- Paragraph 3: [6], [4]

- Paragraph 4: [6], [7]

- Paragraph 5: [3], [7]

- Paragraph 6: [7], [3]

- Paragraph 7: [4], [7]

- Paragraph 8: [5], [6]

- Paragraph 9: [7], [4]

Source: Noah Wire Services