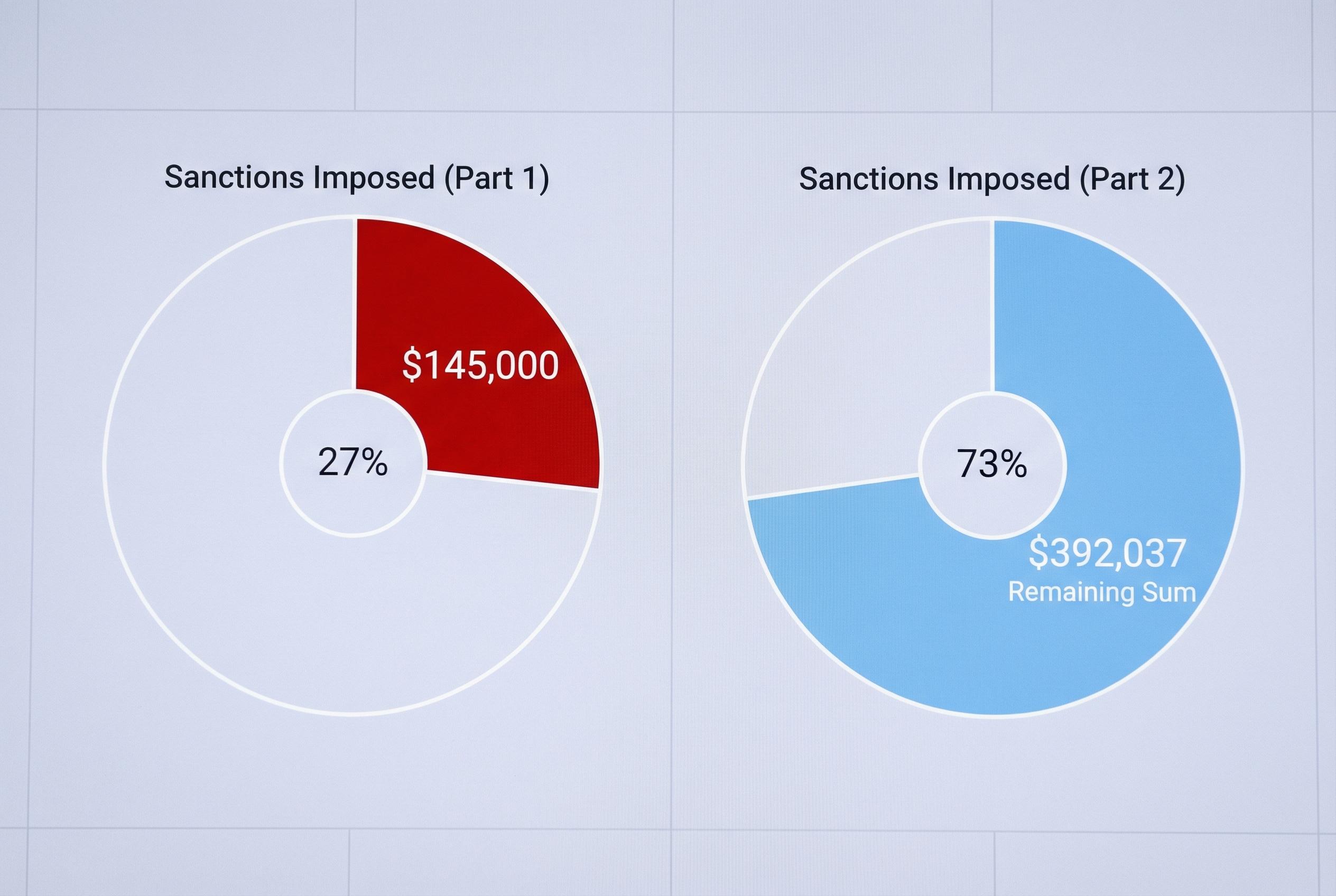

U.S. courts have moved from treating hallucinated citations as an embarrassment to treating them as sanctionable misconduct, with at least $145,000 in penalties imposed in the first quarter of 2026 alone. That reported tally includes a record-setting Oregon penalty and what appears to be the first substantial federal appellate fine tied to briefs containing false authorities. It also lands at a moment when a Northwestern University survey suggests that more than 60% of responding federal judges have used AI tools themselves, exposing a sharp divide between the standards courts demand of lawyers and the technology practices inside the judiciary.

The escalation was abrupt. Sanctions reportedly totalled $5,000 in January and just $250 in February before March brought a sudden surge, including the Sixth Circuit penalty and the Oregon cases. Damien Charlotin of HEC Paris’s Smart Law Hub, who maintains a global database of AI hallucinations in legal proceedings, told NPR that such filings are now appearing with unsettling frequency, with multiple courts flagging fabricated citations on the same day.

Oregon has become the clearest example of how courts are trying to deter the conduct. According to reports, the state Court of Appeals adopted a per-item sanction formula in late 2025, and federal courts in Oregon have followed it. In one district court case involving a winery dispute, the court found 15 fake citations and eight invented quotations across several briefs and imposed more than $15,000 in sanctions on lead counsel, plus adverse costs. In a separate appeal, a lawyer’s filing contained at least 15 false citations and nine fabricated quotations, though the court capped the sanction at $10,000 after noting that new verification steps had been put in place.

The Sixth Circuit took a different but equally stern approach in a Tennessee fireworks case. The court sanctioned two lawyers after finding more than two dozen citations that were wrong, misleading or nonexistent, levying $15,000 against each and ordering them to pay the appellees’ fees on appeal as well as double costs. The opinion did not expressly blame generative AI, but it made clear that lawyers must personally read and verify every authority before filing. Reports describe the outcome as the largest federal appellate sanction yet linked to fabricated citations.

The judicial side of the story complicates the picture. The Northwestern study, published in the Sedona Conference Journal, surveyed a random sample of 502 federal judges and received 112 responses, a response rate of about 22%. It found that 61.6% of respondents had used at least one AI tool in judicial work, most often for legal research and document review. Regular use was far less common, however: only 22.4% reported using AI daily or weekly, and 45.5% said their courts had provided no AI training.

That leaves the judiciary in an uneasy position. Courts are punishing lawyers for AI-assisted failures while many judges are quietly using similar tools for adjacent tasks. The result is not just a policy gap but a verification problem: if AI can be used to draft opinions, summarise records or sift research, what duty to check its output attaches to the judge’s own workflow? No court appears to have answered that question directly yet.

The consequences extend well beyond citation errors. For law firms, e-discovery teams and information governance professionals, the same technology that can invent a case citation can also misclassify a privileged document or distort review results. That makes AI verification a governance issue, not merely a litigation one. ABA Formal Opinion 512 points the same way: it stresses confidentiality, competence, candour and supervision when lawyers use generative AI, and warns that generic consent language is not enough when client data may pass through commercial systems.

There is also a broader liability question emerging upstream. Nippon Life has reportedly sued OpenAI in Illinois, alleging that ChatGPT enabled improper legal filings and caused substantial defence costs. Whatever becomes of that case, it hints at a future in which responsibility may not stop with the lawyer who presses submit but may reach the developer whose tool produced the faulty draft.

For now, the message from the courts is plain: AI may be useful, but it is not a substitute for checking. The sanctions data from early 2026 suggest that judges are no longer content with warnings and one-off rebukes. They are quantifying the harm, pricing the risk and signalling that every AI-assisted filing must be reviewed as if the career consequences depend on it. In practical terms, for lawyers and legal operations teams alike, they do.

Source: Noah Wire Services