AI-assisted code submissions are dividing open-source projects, but the argument around CPAN has a different edge. Some maintainers treat machine-assisted pull requests as an automatic red flag, much as QEMU, NetBSD and Gentoo have moved to exclude AI-generated contributions altogether. Yet the deeper issue is not only whether code is good enough to merge. It is whether the person submitting it has the right to licence it in the first place.

That distinction matters because CPAN has never operated like a tightly controlled rights-clearing house. The Perl Foundation’s licensing guidance asks authors to include clear copyright notices, list differing ownership where relevant, and add licence metadata to package files, but the system still depends heavily on trust and self-declaration. There is no blanket contributor agreement, and no central mechanism that proves a submitter owns every line they upload.
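To make the self-declaration point concrete, here is a minimal sketch of the kind of check a maintainer could run on a distribution's licence metadata. It assumes a `META.json` file in the CPAN::Meta::Spec version 2 layout, where `license` is a list of identifier strings and `unknown` is a permitted value. The function name `licence_problems` is hypothetical and used only for illustration.

```python
import json

# Assumption: in the CPAN::Meta::Spec v2 layout, "unknown" is a permitted
# licence identifier, but it tells a reviewer nothing about the actual terms.
UNINFORMATIVE = {"unknown"}


def licence_problems(meta_text):
    """Return a list of problems found in the declared licence metadata.

    `meta_text` is the raw text of a META.json file. This hypothetical
    helper inspects only what the author declared; it cannot tell
    whether the declaration is true.
    """
    meta = json.loads(meta_text)
    declared = meta.get("license")

    # Tolerate a bare string, although this sketch assumes a list.
    if isinstance(declared, str):
        declared = [declared]

    if not declared:
        return ["no 'license' field declared"]

    problems = []
    for identifier in declared:
        if identifier in UNINFORMATIVE:
            problems.append(f"licence declared as '{identifier}'")
    return problems


if __name__ == "__main__":
    sample = '{"name": "Example-Dist", "license": ["unknown"]}'
    for problem in licence_problems(sample):
        print(problem)
```

A check like this confirms only that a declaration exists and is informative. It cannot establish that the submitter actually holds the rights being licensed, which is the gap the trust-based model leaves open.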

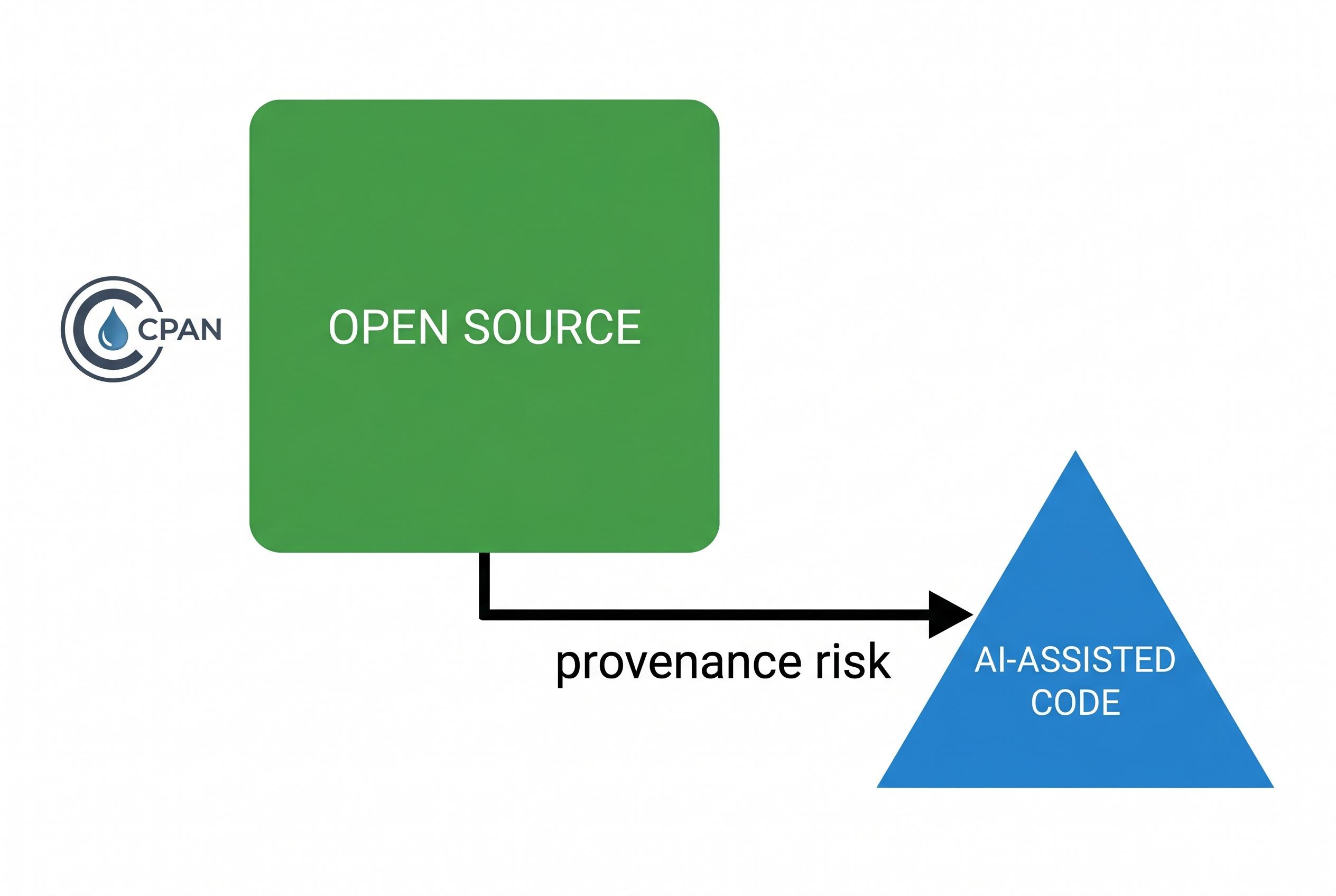

That is the point at which AI becomes less novel than it first appears. The copyright worries now attached to machine-assisted code (unseen training material surfacing in output, uncertain ownership of the generated text, and the possibility that the contributor cannot truly licence what they hand over) resemble longstanding risks in the CPAN ecosystem. Modules have long been exposed to code written under employer ownership, copied from books or reference implementations, ported from elsewhere without clear provenance, or inherited from abandoned projects whose rights holders are hard to trace.

Seen that way, the debate is not between a pristine human-only past and a contaminated AI future. It is between different forms of provenance uncertainty. The Register reported that Gentoo’s council unanimously backed its ban on AI-generated code in April 2024, and later noted that NetBSD had also drawn a line against such contributions, reflecting a broader open-source caution over quality, copyright and ethics. But CPAN’s licensing model already assumes a degree of trust that makes a categorical ban harder to justify as a legal response.

The practical difference AI introduces is volume. A maintainer using modern tooling can generate far more patches, faster, which raises the odds that one will contain borrowed or unlicensable material. That does not make AI contributions uniquely dangerous; it simply means the same review habits need to be applied more often. A sensible response is disclosure, human accountability and basic provenance checks, alongside the ordinary code review already expected of any submission.

In that sense, the legal question is less about whether AI was involved than whether a human with authority took responsibility for the licence grant. The Perl Foundation’s guidance still points toward clear notices, consistent warranty disclaimers and accurate metadata, while the wider open-source debate increasingly favours human sign-off over machine authorship claims. For CPAN maintainers, the conclusion is uncomfortable but straightforward: AI does not create a new category of copyright risk so much as it amplifies an old one.

Source: Noah Wire Services