Canadian courts have spent the past two years trying to contain a problem that increasingly looks less like a one-off drafting mistake and more like a structural fault in the way artificial intelligence is being used in legal work. Judges have flagged fabricated citations in their rulings, tribunals have issued guidance requiring parties to disclose AI use, and lawyers have faced costs orders and fines after filing non-existent authorities. Yet the core question remains largely unanswered: why are the makers of the tools that generate these inventions still standing outside the frame? According to a study by Courtready.ca, the scale of the issue has grown sharply across Canadian courts and tribunals.

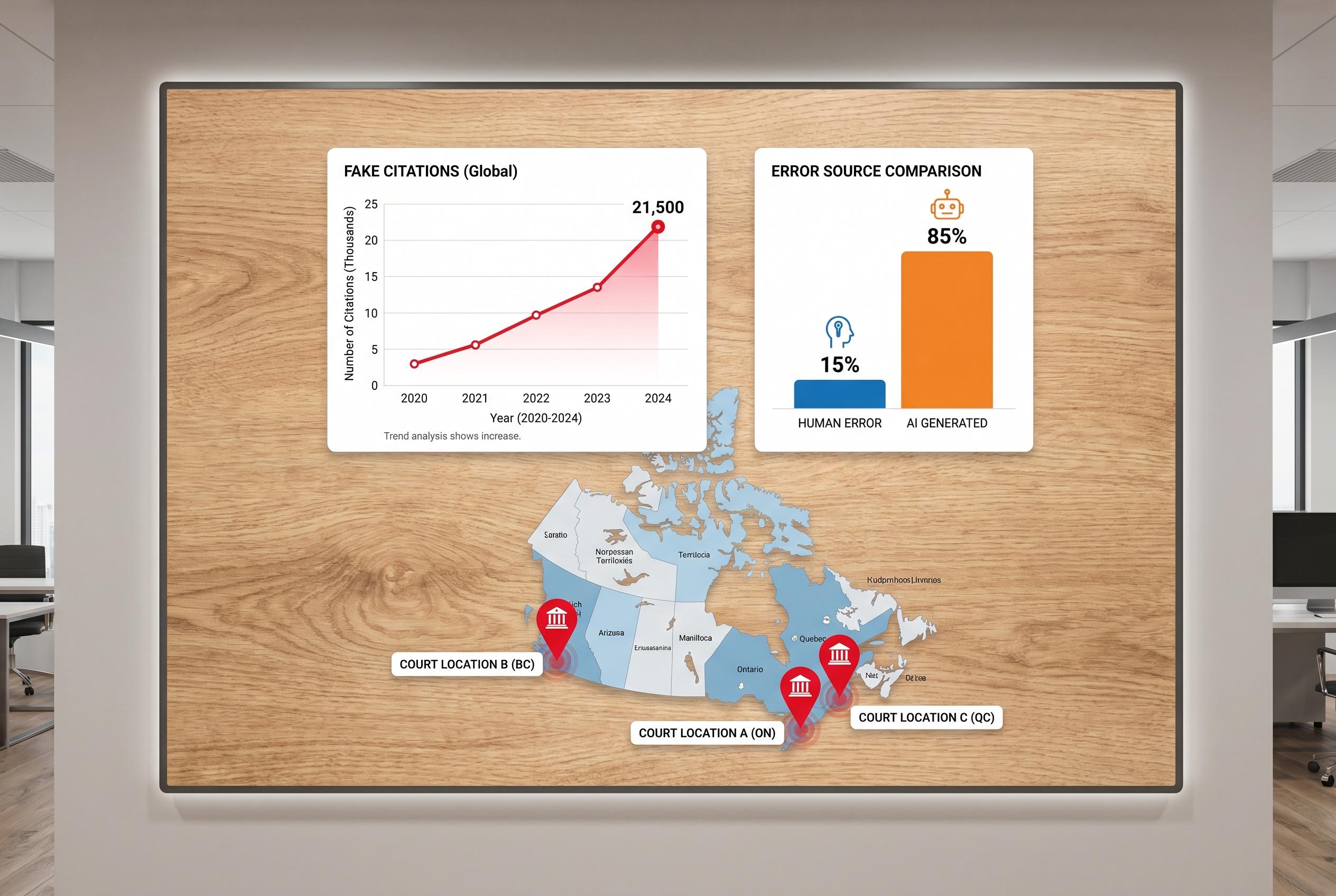

Courtready.ca said it found at least 211 fictitious case citations in 111 decisions between January 2024 and March 2026, while a National Magazine article put the figure at 249 citations across 138 decisions to April 13, 2026. Both accounts point in the same direction: the numbers have risen quickly, from a handful of cases in 2024 to a much larger volume in 2025 and a still-growing tally in 2026. The Courtready study said that in most of the decisions where the source of the false citation could be identified or inferred, AI tools were believed to be responsible.

The reason AI tools produce invented citations is straightforward. Most public generative AI systems do not actually consult a legal database when asked for case law; instead, they assemble something that looks like a citation from patterns in the data they were trained on. That makes the output superficially convincing but unreliable in substance. The National Magazine article argues that this creates a particular hazard for self-represented litigants, who accounted for the large majority of the cases reviewed. For someone with no legal background, a polished list of authorities from a chatbot can look authoritative even when it is wholly invented.

The problem is no longer limited to parties and their filings. In March 2026, La Presse reported on a Quebec Superior Court decision that appeared to contain fictitious citations in the court’s own reasons, although it has not been established that AI was responsible. That possibility matters because it suggests the issue may be escaping the safeguards built to catch it before it reaches judgment. Courtready.ca has responded by building a database of hallucinated citations and a checking tool called CaseCheck, reflecting a broader shift from warnings to practical verification. But the deeper policy question, as the National Magazine piece argues, is whether courts should continue treating this as a mistake made by users alone, or whether AI vendors should face scrutiny for products that can manufacture legal authority at scale.

Source: Noah Wire Services