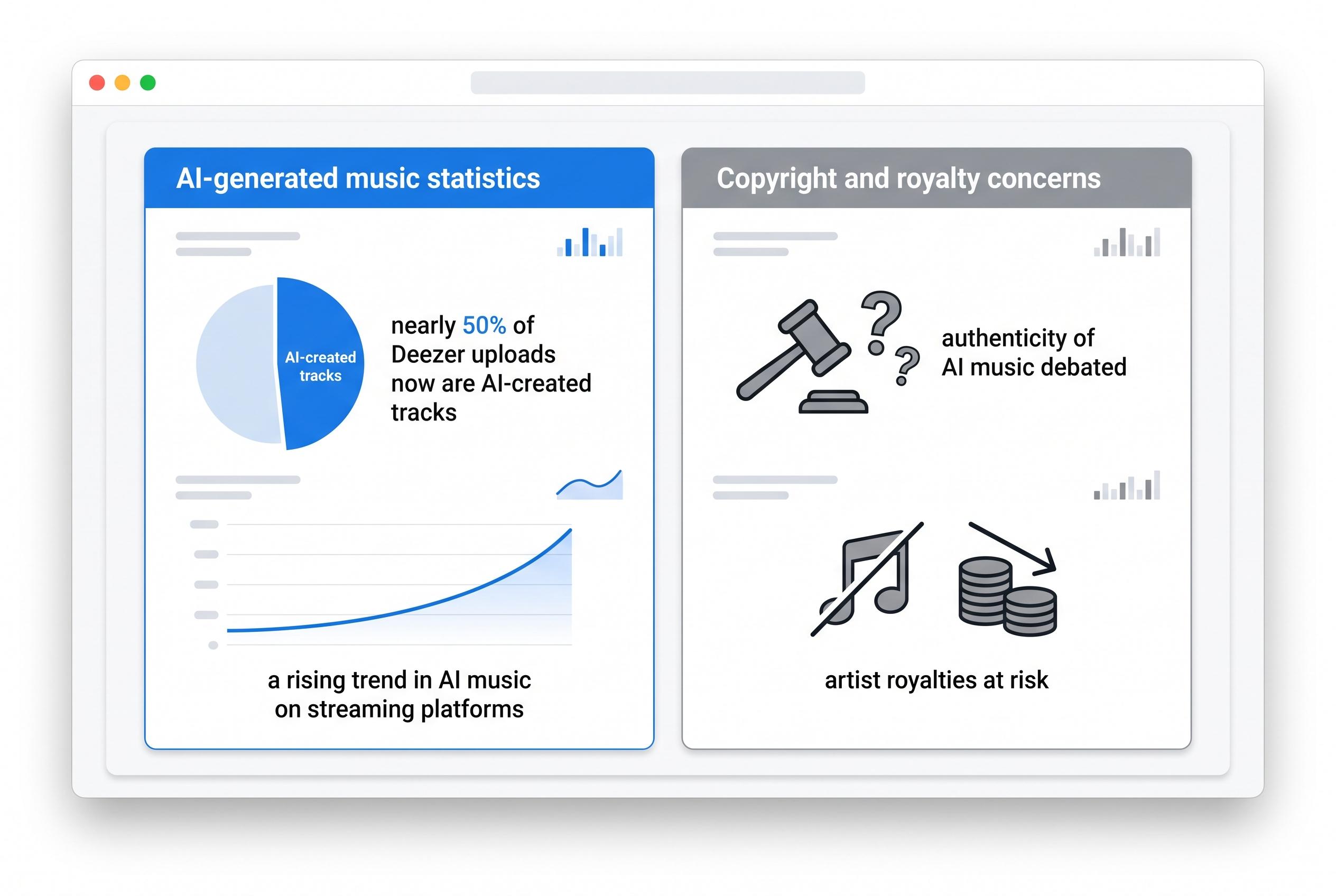

The music industry is grappling with an explosion of AI-generated tracks that is beginning to reshape streaming platforms, royalty systems and the very idea of authorship. Deezer, the French streaming service, said it is now seeing about 75,000 fully AI-made songs uploaded every day, equivalent to roughly 44% of all daily submissions, a sharp rise from earlier in 2025. According to company figures, its detection system has identified more than 13.4 million AI tracks since January 2025.

That surge has turned what once seemed like a novelty into a scale problem. Deezer says only a tiny share of these uploads are actually streamed, but the flood still creates costs in storage, processing and moderation. The company has also been flagging and demonetising large volumes of suspected fraudulent content, and it has invited other streaming services to use its AI-detection tools.

The bigger concern is abuse. AI music is being used not only to generate endless disposable songs, but also to inflate streaming numbers artificially and siphon royalties away from human artists. Reuters reported last month that Michael Smith, a North Carolina man, pleaded guilty to orchestrating a long-running streaming fraud scheme built around AI-made songs and automated listening bots, a case that prosecutors said generated millions of fake plays and around US$1.2 million a year at its peak.

The impact has not been limited to anonymous rights holders. Musicians including Vancouver singer Paula Toledo and singer-songwriter Grace Mitchell have said their work was copied or re-released by others, forcing them into protracted disputes to prove ownership to streaming services. The problem is made harder by the speed of digital distribution and the ease with which bogus accounts can repost legitimate recordings under new names.

At the same time, entirely synthetic performers are crossing into the mainstream. AI-created acts such as Xania Money, SiennaRose, Eddie Dalton and IngaRose have accumulated attention online, while the AI project Breaking Rust reached the top of Billboard’s Country Digital Song Sales chart in November with "Walk My Walk". Another AI-generated track, "Celebrate Me" by IngaRose, appeared in nearly 300,000 TikTok videos and topped digital sales charts in several countries earlier this year, illustrating how quickly fabricated artists can travel across platforms.

Listener attitudes appear mixed, though perhaps more open than many musicians would like. A global survey conducted for Deezer found that 97% of respondents could not tell the difference between AI-generated and human-made music, and the same research suggested many listeners were uneasy about that result. Luminate, meanwhile, has said a substantial share of US listeners are not especially bothered by AI-made songs. That tension is helping drive a wider industry rethink, especially after Warner Music settled litigation with Suno and Udio, and Universal Music struck a deal with Udio after initially suing the company.

What began as a novelty in music software is now a structural issue for streaming. The flood of synthetic tracks is testing copyright enforcement, artist payments and audience trust all at once, leaving the business to confront a question that sounds almost old-fashioned: when you press play, who, or what, is actually performing?

Source: Noah Wire Services