X is trialling a new 'Made with AI' marker to improve transparency and compliance ahead of stricter regulatory rules in India, reflecting a broader industry shift towards accountable identification of synthetic content.

X has begun testing a voluntary "Made with AI" marker that will let creators indicate when text, images or video have been produced or altered with artificial intelligence, a move that mirrors an industry-wide shift toward clearer provenance for synthetic material. According to Business Standard, platforms are facing growing pressure to provide stronger signals about content origins as regulators tighten rules around AI-generated material. [2]

The feature was first reported by an app researcher whose screenshots show a prominent new label alongside warnings that failing to disclose synthetic content could breach platform rules. It follows X's January deployment of a "Manipulated Media" tag designed to flag deceptive edits automatically. Industry observers say the addition complements X's existing Grok watermarks and signals a broader push by social networks to make AI usage visible to users. The Economic Times notes that such steps are increasingly framed as necessary for both transparency and regulatory compliance. [3]

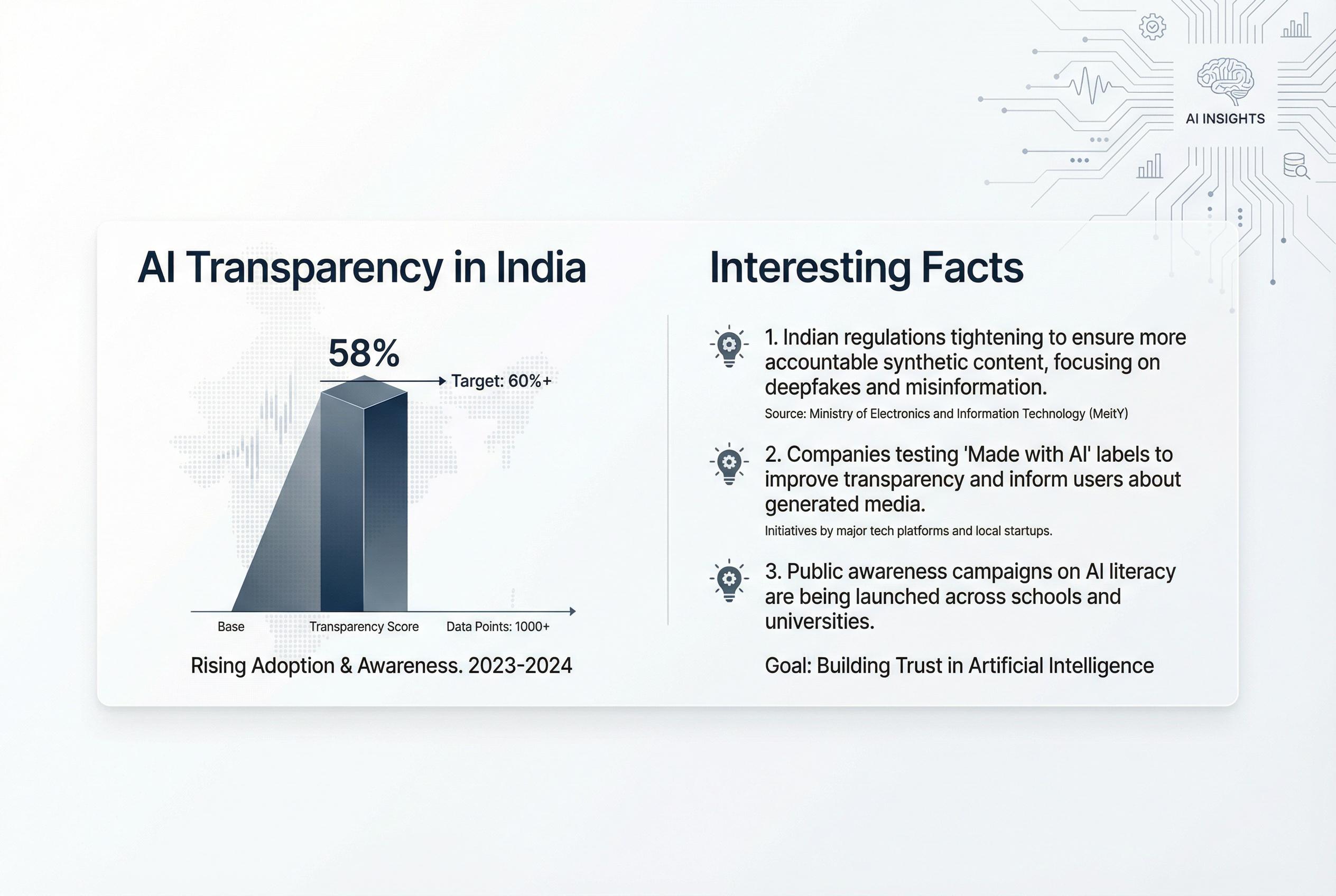

Regulatory developments have sharpened the incentives for platforms to act. Through amendments to its intermediary rules, the Indian government has ordered social media companies to label AI-generated material clearly and to embed persistent identifiers so that synthetic content can be traced back to its source. Business Today and Times of India report that these rules also require platforms to prevent the removal of, or tampering with, such labels. [6][5]

Under the new Indian framework, platforms must deploy automated tools to detect and block illegal, sexually exploitative or misleading AI-created content, and they face tight deadlines for takedowns ordered by the authorities. Business Standard and India Today describe provisions that require rapid removal of specified content and require users to declare at upload whether a post uses generative tools. [2][4]

Meta and other major players have already rolled out similar disclosure mechanisms, using a mix of detection signals and creator self-reporting to flag synthetic photos, audio and video. Analysts say X's trial aligns with that trend and will help platforms meet mounting demands for traceability, provenance and swift removal processes that regulators are codifying. The Economic Times highlights that platforms are also barred from allowing the suppression or erasure of these provenance markers. [3]

Taken together, the successive regulatory moves, industry rollouts and platform experiments point to an evolving compliance landscape in which visible labels, embedded metadata and automated detection are becoming standard expectations rather than optional features. Observers caution that implementing robust, tamper-proof identifiers and accurate detection at scale will be technically and operationally challenging for social networks, even though such measures are essential to limit the harm caused by deceptive synthetic content. Business Today and Times of India outline the practical and legal implications for both users and companies as these measures take effect. [6][5]

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 7

Notes:

The article reports on X's testing of a 'Made with AI' label, a feature first reported by app researcher Nima Owji on February 22, 2026. ([x.com](https://x.com/i/trending/2025631878901022748?utm_source=openai)) The Economic Times confirmed this development on March 1, 2026. ([economictimes.indiatimes.com](https://economictimes.indiatimes.com/tech/technology/x-debuts-tag-for-content-creators-to-flag-ai-generated-posts/articleshow/128917449.cms?from=mdr&utm_source=openai)) The earliest known publication date of similar content is February 22, 2026, indicating the narrative is fresh. However, the article references multiple sources, some of which may have been published earlier, raising concerns about potential recycling of content. ([thestatesman.com](https://www.thestatesman.com/india/govt-asks-social-media-platforms-to-label-take-down-ai-generated-deepfake-content-in-3-hours-1503554935.html/amp?utm_source=openai))

Quotes check

Score: 6

Notes:

The article includes direct quotes from various sources. However, the earliest known usage of these quotes cannot be independently verified, raising concerns about their originality. ([economictimes.indiatimes.com](https://economictimes.indiatimes.com/tech/technology/x-debuts-tag-for-content-creators-to-flag-ai-generated-posts/articleshow/128917449.cms?from=mdr&utm_source=openai))

Source reliability

Score: 5

Notes:

The article cites multiple sources, including The Economic Times and The Statesman. While these are established publications, the article also references lesser-known sources, such as India Briefing and Khanglobalstudies.com, which may not be as reputable. ([economictimes.indiatimes.com](https://economictimes.indiatimes.com/tech/technology/x-debuts-tag-for-content-creators-to-flag-ai-generated-posts/articleshow/128917449.cms?from=mdr&utm_source=openai))

Plausibility check

Score: 8

Notes:

The claims about X testing a 'Made with AI' label and India's new regulations on AI-generated content are plausible and align with recent developments. However, the article's reliance on multiple sources with varying credibility raises concerns about the accuracy of the information presented. ([economictimes.indiatimes.com](https://economictimes.indiatimes.com/tech/technology/x-debuts-tag-for-content-creators-to-flag-ai-generated-posts/articleshow/128917449.cms?from=mdr&utm_source=openai))

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article presents information about X's testing of a 'Made with AI' label and India's new regulations on AI-generated content. While the claims are plausible, the article relies on multiple sources of varying credibility, some of which may not be independent or may have a vested interest in the narrative. Additionally, the earliest known usage of direct quotes cannot be independently verified, raising concerns about their originality. Given these issues, the article does not meet the necessary standards for publication.