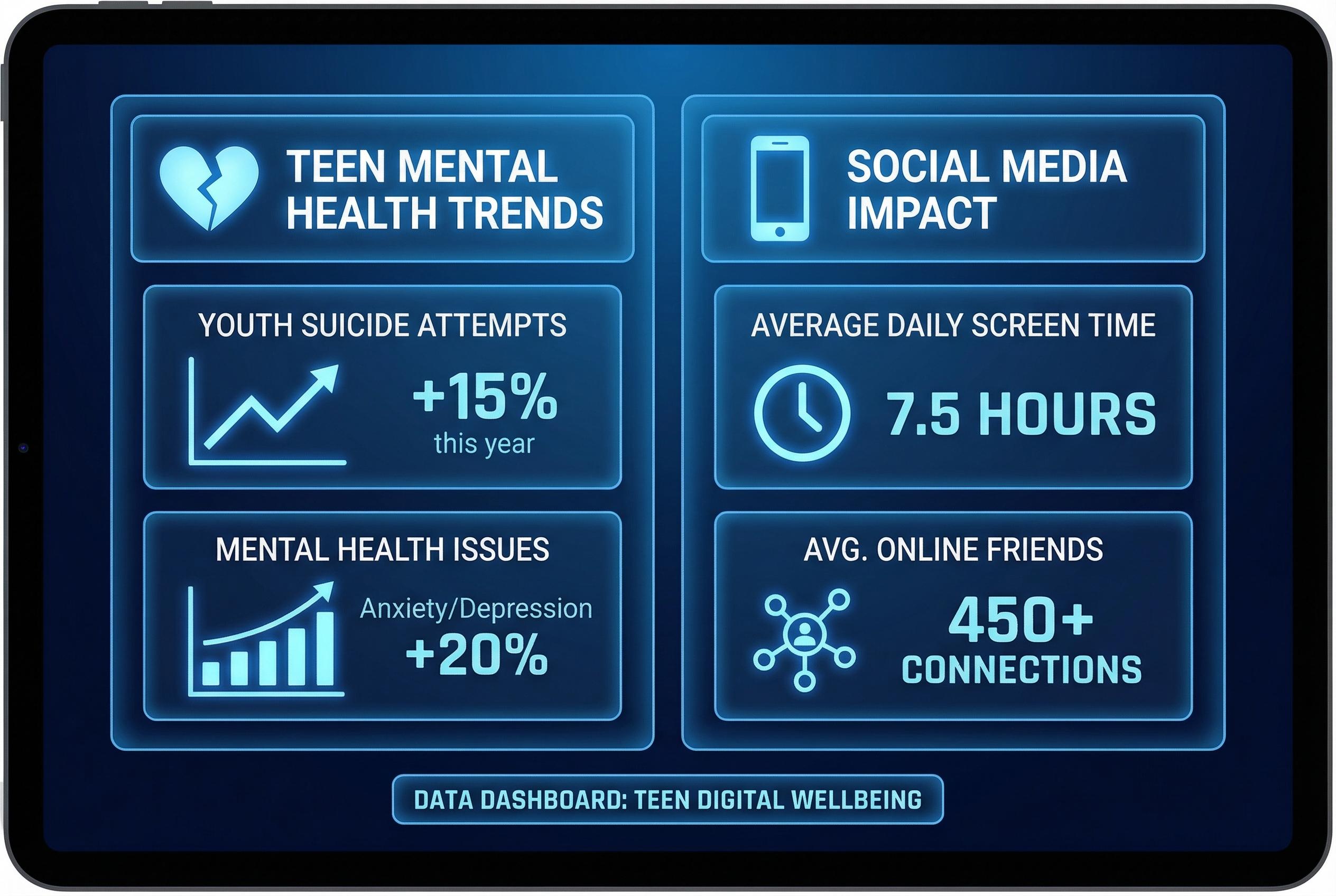

A recent tragedy in India, where three young sisters died after jumping from a high-rise when their mobile access was curtailed, has reignited debate about how deeply digital platforms are woven into adolescents’ lives and how dangerous that dependence can become. Research tracking thousands of young people over several years has reported that compulsive patterns of phone and social media use are associated with substantially greater risks of self-harm and suicidal behaviour, underscoring how acute the problem can be for vulnerable teens. (Sources: National Center for Health Research; longitudinal study reported in The Guardian).

Global surveys and health researchers have documented a complex picture: many adolescents report social media as a source of connection, yet substantial minorities describe its effects as harmful. Industry and public-health studies alike find higher rates of anxiety and depressive symptoms among frequent users, though causation is difficult to prove and other influences, such as reduced time outdoors or concurrent substance use, often co-occur with heavy online engagement. Johns Hopkins Children’s Center, for example, noted that greater social media use correlates with increased depressive symptoms while also emphasising that pre-existing depression can drive more online time. (Sources: National Center for Health Research; Johns Hopkins Children's Center).

Experts point to several interacting drivers: biological susceptibility during adolescence, platform features engineered to maximise engagement, the personalised allure of algorithmic feeds and a social environment in which much adolescent life is mediated by screens. At the same time, evidence shows harms beyond mood: disrupted sleep and daily rhythms are common among young people who use devices late into the evening, amplifying risks to mental health. A study published in SLEEP found widespread use of social media in the two hours before bedtime and linked negative online interactions with both poor sleep and higher depressive symptoms. (Sources: Johns Hopkins Children's Center; study in SLEEP).

Technology firms maintain they deploy layered protections for minors, from automated moderation to parental controls, but critics and regulators argue these measures fall short. A coalition of U.S. states has accused a major social media company of intentionally building addictive features that put children at risk and of collecting data without parental consent; such claims reflect wider concern that commercial design choices prioritise time-on-platform over young users’ wellbeing. (Sources: Associated Press coverage of state lawsuit; National Center for Health Research).

The question of corporate responsibility is now being litigated in courts and debated in legislatures. Recent legal actions seek to treat platform designs as defective products, drawing analogies in public commentary to past fights over harmful consumer goods. Industry leaders counter that responsibility also rests with caregivers and that device-level parental supervision has a role to play; regulators and advocates, however, argue for stronger obligations on platforms themselves. (Sources: Associated Press; The Guardian).

Clinicians and public-health advisers urge a balanced response rooted in evidence. Reviews and advisory pieces reference the U.S. Surgeon General’s concerns and recommend attention to sleep hygiene, limits on nightly device use and closer monitoring of signs of anxiety or depression. They also stress that social media can yield social support for some young people, so policies need nuance rather than blanket prohibition. (Sources: Psychology Today commentary referencing Surgeon General advisory; National Center for Health Research).

Policymakers worldwide are experimenting with different levers: some governments are moving towards age-based restrictions and stiffer enforcement, while critics warn that simple bans may be circumvented and could drive young people toward less-regulated corners of the internet. Legal and technical hurdles, including questions about privacy-respecting age verification and the limits of national regulation of global platforms, mean there is no easy, single solution. (Sources: Associated Press; The Guardian).

What emerges from the research and recent public debate is a call for layered responses that combine improved platform design, honest corporate accountability, informed parental strategies and accessible mental-health supports. Health authorities’ guidance on limiting very early childhood screen time points to a preventive approach; for school-age children, professionals recommend family-level “digital wellness” plans that set boundaries on sleep-disrupting use, promote offline activities and ensure rapid access to help when signs of distress appear. Confronting the harms linked to excessive or compulsive online use will require coordinated action across health services, schools, families and technology companies. (Sources: Johns Hopkins Children's Center; Psychology Today).

Source: Noah Wire Services