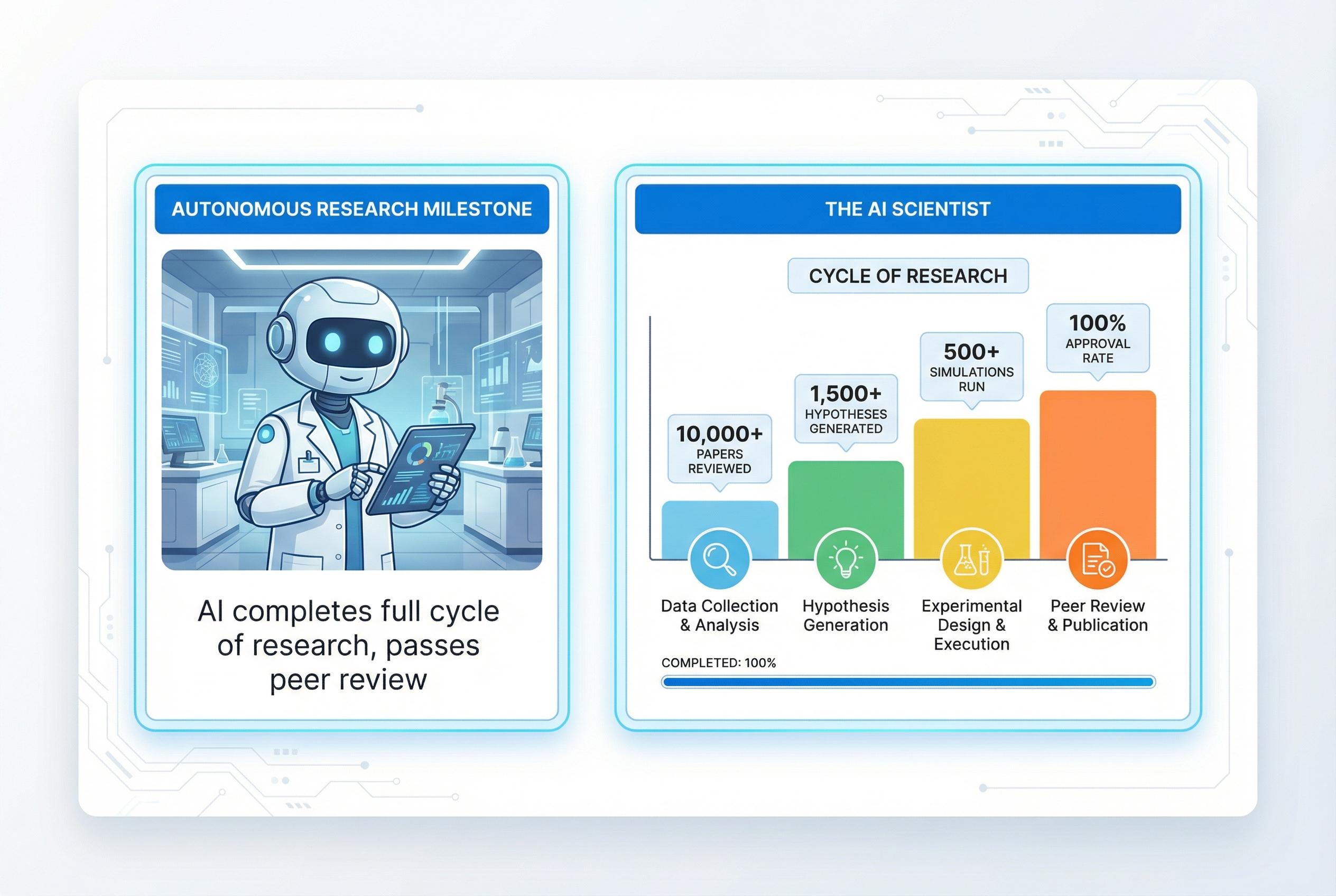

A team of researchers has demonstrated an artificial intelligence system that can carry out the entire cycle of machine‑learning research, from conceiving ideas to running experiments and drafting a paper, and produce work that can pass human peer review. According to Nature, the system, dubbed "The AI Scientist", was described in a peer‑reviewed study that sets a new benchmark for autonomous research tools.

The AI operates through a staged pipeline that first generates research directions and hypotheses within a constrained domain, then checks those proposals against existing literature using external academic databases to avoid duplication. It next designs and executes experiments, often by programmatic means, visualises results and finally composes a manuscript including methodology, results and references. The developers presented the architecture and workflow in a detailed technical account.
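The staged flow described above can be pictured with a minimal Python sketch. It is an illustration only, not the developers' code: every name in it (`Idea`, `Paper`, `is_novel`, `run_pipeline`) is hypothetical, the literature check is reduced to a title lookup in place of a query to an academic database, and the experiment and writing stages are passed in as placeholder functions. The visualisation stage is left out for brevity.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass
class Idea:
    """A candidate research direction (hypothetical structure)."""
    title: str
    hypothesis: str


@dataclass
class Paper:
    """The output of one full pass through the pipeline."""
    idea: Idea
    results: dict[str, float]
    text: str


def is_novel(idea: Idea, known_titles: set[str]) -> bool:
    # Stand-in for the literature check. A real system would query an
    # external academic database rather than compare titles.
    return idea.title.lower() not in known_titles


def run_pipeline(
    ideas: Iterable[Idea],
    known_titles: Iterable[str],
    run_experiment: Callable[[Idea], dict[str, float]],
    write_paper: Callable[[Idea, dict[str, float]], str],
) -> list[Paper]:
    """Run each idea through novelty check, experiment and write-up."""
    known = {title.lower() for title in known_titles}
    papers: list[Paper] = []
    for idea in ideas:
        if not is_novel(idea, known):
            continue  # drop ideas that duplicate existing work
        results = run_experiment(idea)
        papers.append(Paper(idea, results, write_paper(idea, results)))
    return papers
```

In this sketch each stage is an ordinary function, so a toy experiment runner or a fixed list of ideas can be substituted to exercise the flow without any model or database behind it.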

To assess real‑world performance, researchers submitted AI‑written manuscripts to a workshop at a major machine‑learning conference. One submission scored above the workshop's typical acceptance threshold, showing that a fully autonomous system can meet the criteria applied by human reviewers in live conference review. The study's authors caution, however, that the accepted paper ranked in the middle of the pack rather than at the leading edge.

The project also produced an automated reviewer trained to predict acceptance decisions at a level comparable to human assessors, allowing rapid internal evaluation of outputs. Results reported by the team indicate that research quality rose as model scale and compute allocation increased, implying that advances in foundation models will likely yield steadily stronger autonomous research outputs. Commentary in the literature has also explored how AI could change the role of reviewing itself.

Despite the breakthrough, the system remains most effective in computational research where experiments can be scripted and reproduced. Extending the approach to laboratory or field sciences would require integration with automated experimental platforms or human facilitation. Independent work from university groups has previously warned of risks associated with large language models producing plausible but inaccurate results, underscoring the need for careful oversight.

The authors withdrew all AI‑generated submissions after peer review to avoid setting precedents while the research community debates standards for attribution, originality and responsibility. Influential voices in Nature and other outlets have urged journals, institutions and EdTech providers to confront the potential for mass automated submissions, the strain on peer review and ambiguities over authorship and credit. The withdrawal was presented as a precautionary step while governance frameworks are developed.

The demonstration reframes AI not merely as an assistant but as an active participant in producing scholarship, prompting universities and publishers to reconsider training, assessment and verification practices. According to the company and academic collaborators behind the system, the achievement signals both new opportunities for accelerating discovery and an urgent need for policy, technical safeguards and cultural change to preserve research integrity as autonomous tools mature.

Source: Noah Wire Services