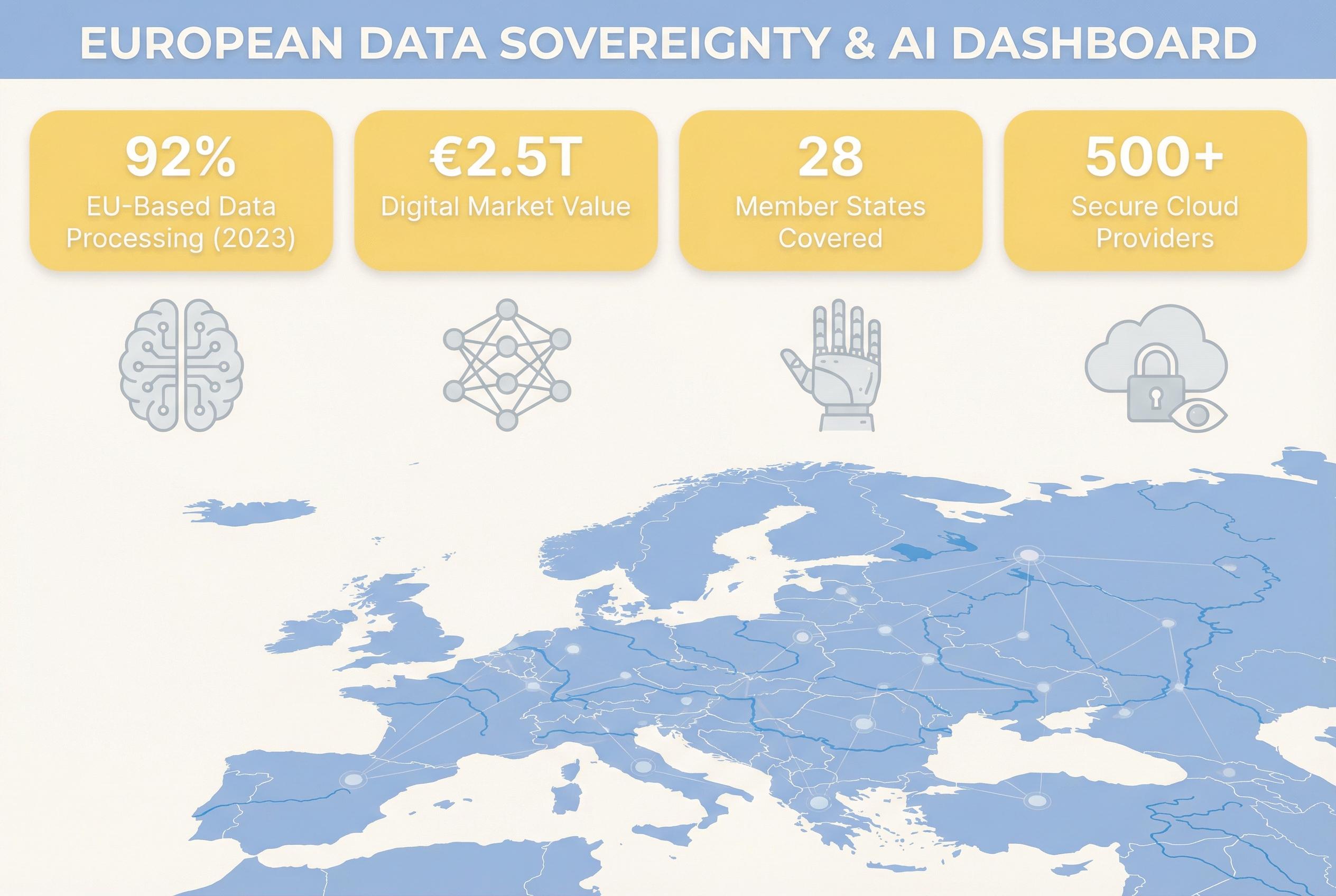

Europe’s scramble for digital sovereignty is increasingly centring on an overlooked asset: the datasets that feed artificial intelligence. As models become commoditised, control over the underlying data is emerging as the decisive commercial advantage for companies seeking durable differentiation in an AI-driven economy. According to TechRadar Pro, policymakers and businesses are already reshaping architectures to prioritise data locality, performance and compliance as foundational elements of national and corporate strategy. [2]

Countly, a product analytics platform founded in 2013 and built on an open-source, self-hosted model, positions itself as an early entrant in that shift. The company enables organisations to capture and analyse operational and product-usage datasets within their own infrastructure, a design intended to keep sensitive behavioural data from being routed through third-party services. Countly’s blog describes self-hosting and private cloud deployments as the core means of preventing vendors from monetising analytics data and of retaining full custody of that data. [6]

Onur Alp Soner, Countly’s CEO and co-founder, frames the matter bluntly: "Basically, our main focus is data control and data ownership. We want companies to have complete control over the data they collect. That’s why we’ve existed since day one." He traces Countly’s mission back to a period when businesses increasingly traded detailed user telemetry for free analytics and advertising models they could not control. That history, he argues, makes data ownership a strategic concern rather than merely a regulatory one. [1][6]

Industry analysis suggests Soner’s view is gaining traction because AI materially raises the economic value of proprietary datasets. TechRadar Pro notes that the EU’s evolving regulatory landscape, from GDPR through the Data Governance Act to the EU AI Act, is accelerating the move towards local, high-performance data environments and hybrid infrastructures that balance sovereign control with cloud flexibility. These infrastructure shifts (metro-edge data centres, AI-optimised storage and closer attention to data locality) are being promoted as necessary to meet both the compliance and the latency demands of modern AI workloads. [2][5]

That regulatory momentum also reframes data governance as an operational imperative. Experts advise treating governance as a continuous service: tracking lineage, monitoring quality, securing consent and auditing model behaviour for bias and drift. TechRadar Pro emphasises five governance pillars (quality, security, transparency, ethics and compliance) and warns that few organisations yet have the maturity to apply them consistently to generative and agentic AI systems. Boards, the analysis argues, must treat governance as a strategic asset if firms are to scale trusted AI. [3]

The EU AI Act, most of whose obligations are due to apply from August 2026, is sharpening the calculus. Reporting suggests that the regulation’s risk-based requirements for transparency, verification and human oversight make private or self-hosted AI implementations more attractive to firms with sensitive data. TechRadar Pro recommends private models and controlled deployments as a way to meet obligations under the Act and to reduce exposure to reputational and financial penalties. For many businesses, choosing private AI and tighter data controls is as much about risk management as about competitive strategy. [4]

Yet Europe faces practical constraints in realising full-stack sovereignty. Soner admits that even vendors building for sovereignty rely on foundational technologies produced outside Europe. "There’s no way around it. Take databases: almost all major ones are US-based. So the question isn’t whether you use external technology, it’s how you use it. It’s about layering." This pragmatic layering, in which sensitive data flows stay in-house while global compute or tooling is used selectively, is increasingly presented as the realistic path to meaningful data control. [1]

Translating sovereignty into customer value is critical if firms are to justify less convenient or more costly architectures. Soner points to Apple as an example of making privacy a tangible feature: "They communicate clearly: your data stays on your device. That’s the right approach. It’s not about saying, ‘We’re a German company, we follow strict regulations.’ Customers don’t care about that. They care about what’s in it for them." Framing data ownership in terms of user benefit, rather than as compliance PR, is a recurring recommendation across industry writing. [1]

For startups and scale-ups, the trade-offs can be acute: free, hosted tools accelerate development but may leak the very data that would become a firm’s lasting advantage. Soner warns that without disciplined decisions about data flows, firms risk outsourcing their long-term moat. "Your data is your only moat," he says, arguing that AI amplifies whatever quality of data a business possesses. Building data ownership into culture and architecture early can make control the default operational posture rather than an expensive retrofit. [1]

If Europe is to retain companies that build valuable datasets, policy and capital must align. Analysts argue that improvements in regional infrastructure (data centres, networking and electricity) must be matched by funding, incentives and ecosystem support to persuade founders to stay. TechRadar Pro highlights the rise of regional metro-edge facilities and hybrid models as part of a pragmatic European strategy that turns regulatory constraints into competitive leverage. The hope is that by combining governance-first design, sovereign storage and selective use of external compute, European firms can capture value from their data without sacrificing scale or innovation. [2][5]

Source Reference Map

Inspired by headline at: [1]

Sources by paragraph:

- Paragraph 1: [2]

- Paragraph 2: [6]

- Paragraph 3: [1],[6]

- Paragraph 4: [2],[5]

- Paragraph 5: [3]

- Paragraph 6: [4]

- Paragraph 7: [1]

- Paragraph 8: [1],[6]

- Paragraph 9: [2],[5]

Source: Noah Wire Services