The UK's Information Commissioner's Office has issued draft guidance emphasising that organisations deploying agentic AI must uphold data transparency and accountability, even when using third-party systems, signalling stricter compliance expectations for innovative AI applications.

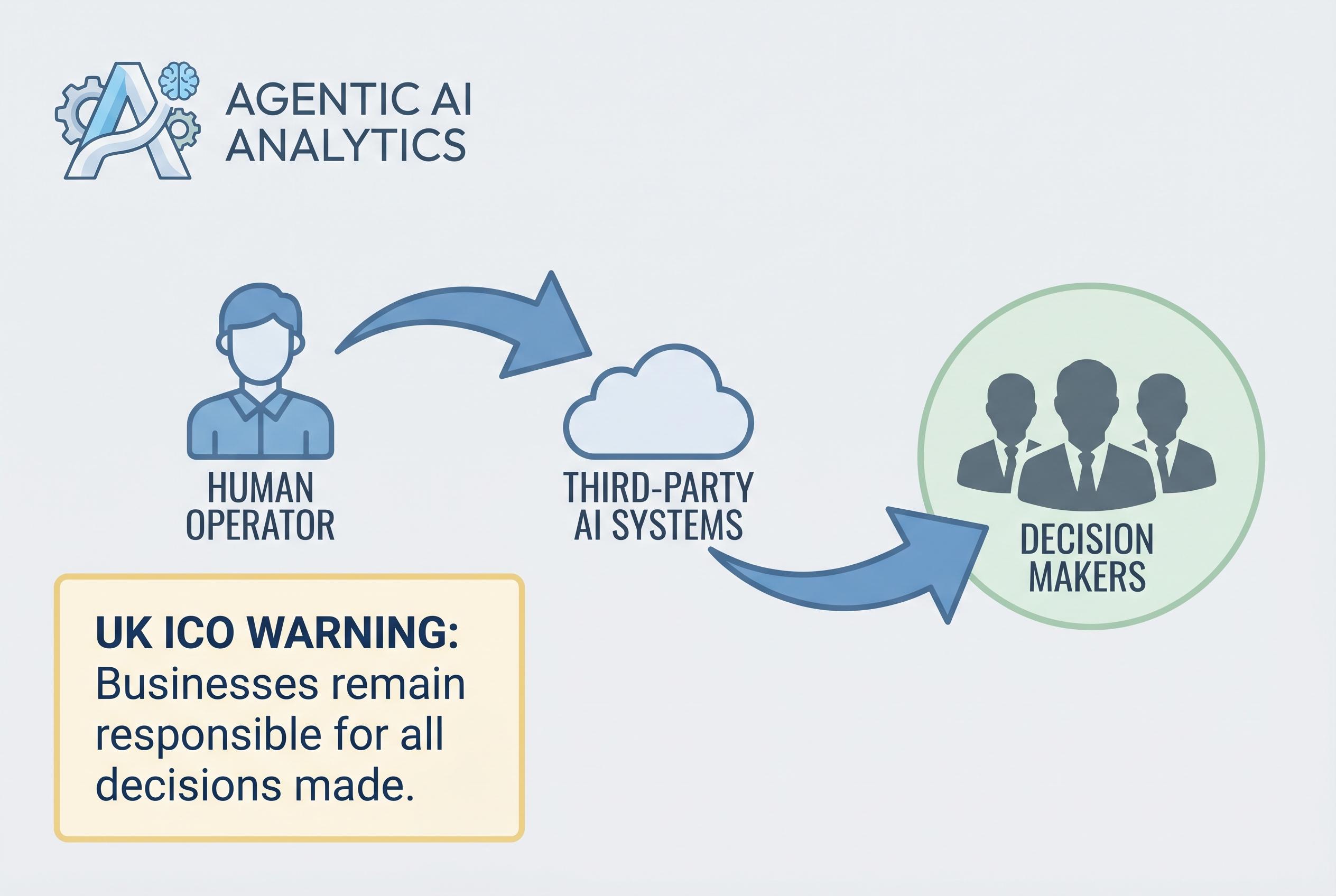

The Information Commissioner's Office has put UK startups on notice: agentic AI may be capable of acting at speed and scale, but that does not loosen the obligations around data protection, transparency or accountability. In draft guidance, the regulator set out what organisations must be able to show when autonomous systems process personal data on users' behalf, and the message is that responsibility stays with the business deploying the tool, even when the underlying agent comes from a third party. The ICO's intervention lands alongside a wider regulatory push, with the Competition and Markets Authority and the Digital Regulation Cooperation Forum also warning that existing rules already apply to these systems.

At the heart of the ICO's position is a simple idea with difficult consequences for founders: if an AI agent makes a decision, the organisation must be able to explain how that decision was reached, what information it used and why it was permitted to act. According to the ICO's updated AI and data protection guidance, fairness remains central, and the regulator says the framework is intended to support innovation while protecting individuals, including vulnerable users. The agency's earlier report on agentic AI also flagged accountability, transparency, data minimisation and purpose limitation as likely pressure points for businesses adopting these tools.

That focus on accountability becomes more complex when a product relies on several systems at once. Experts quoted by TechRound said one of the hardest issues is determining who controls what in a multi-agent environment, especially where an autonomous tool moves across platforms and services. They argued that startups need clearer data-flow mapping, stronger audit trails and documented decision-making from the outset, rather than trying to patch compliance on after launch. For sectors such as health, finance and HR, several specialists urged firms to fast-track data protection impact assessments before releasing the next version of a product.
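To make the audit-trail point concrete, the following is a minimal Python sketch of what a per-decision record might look like. It is illustrative only: the class names, field names and example values are hypothetical and are not taken from the ICO's guidance or from any TechRound contributor. It assumes a startup wants to capture, for each agent action, what was done, which categories of personal data were used, why the agent was permitted to act and how the decision was reached.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
import json


@dataclass(frozen=True)
class AgentDecisionRecord:
    """One audit-trail entry for a single action taken by an AI agent.

    All field names are hypothetical and chosen for illustration.
    """
    agent_id: str                 # which agent acted
    action: str                   # what the agent did
    data_used: tuple[str, ...]    # categories of personal data consulted
    lawful_basis: str             # why the agent was permitted to act
    rationale: str                # how the decision was reached
    human_reviewed: bool = False  # whether a person checked the outcome
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class AuditTrail:
    """Append-only, in-memory log of agent decisions."""

    def __init__(self):
        self._records = []

    def log(self, record):
        # Records are frozen dataclasses, so entries cannot be edited later.
        self._records.append(record)

    def records_using(self, data_category):
        # Supports data-flow mapping: find every decision that touched a category.
        return [r for r in self._records if data_category in r.data_used]

    def to_json(self):
        # Export for documentation or review, for example alongside a DPIA.
        return json.dumps([asdict(r) for r in self._records], indent=2)


trail = AuditTrail()
trail.log(AgentDecisionRecord(
    agent_id="scheduler-agent",
    action="sent_meeting_invite",
    data_used=("email_address", "calendar_availability"),
    lawful_basis="consent",
    rationale="User asked the agent to arrange a meeting with a named contact.",
))
print(trail.to_json())
```

A production system would need durable, tamper-evident storage and a retention policy. The sketch only shows the kind of information such a trail might hold across a multi-agent environment.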

The UK's approach remains less prescriptive than the EU's AI Act, but that flexibility comes with uncertainty. The ICO is not setting out a rigid risk-category system, instead leaning on principles that require judgment from founders and their advisers. That may suit teams with mature governance, but it can slow younger companies that lack in-house legal and compliance support. The CMA has separately warned that consumer law obligations must be built into products from the beginning, and that enforcement can extend to outcomes generated by AI agents.

Even so, some founders and consultants see the tightening of expectations as a competitive advantage rather than a brake. TechRound's contributors argued that businesses which build traceability, human review and clear boundaries into their systems early will be better placed for enterprise sales, investor scrutiny and expansion into other markets. The broad conclusion from the recent wave of UK guidance is that agentic AI is no longer being treated as a novelty; it is being folded into the country's existing legal and regulatory architecture, with startups expected to prove they can keep up.

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes:

The article was published on 10 April 2026, reporting on the UK's Information Commissioner's Office (ICO) draft guidance on agentic AI. The ICO's guidance on AI and data protection was last updated on 15 March 2023. ([cy.ico.org.uk](https://cy.ico.org.uk/for-organisations/uk-gdpr-guidance-and-resources/artificial-intelligence/guidance-on-ai-and-data-protection/?q=impact+assessment&utm_source=openai)) The article references recent developments, including the Competition and Markets Authority's research paper from 9 March 2026 and the Digital Regulation Cooperation Forum's foresight paper from 31 March 2026. The content is recent, but the article relies on a single source for the ICO's guidance and includes no direct quotes from it. Because that guidance is publicly available, the article may be summarising existing information rather than offering original reporting. The freshness score is reduced due to these concerns.

Quotes check

Score: 5

Notes:

The article does not include direct quotes from the ICO's guidance or other sources, which makes the information presented difficult to verify. This raises concerns about the article's originality and the potential for misrepresentation, and significantly reduces its credibility.

Source reliability

Score: 6

Notes:

The article is published on TechRound, a UK-based platform focusing on startup news and insights. While TechRound is a niche publication, it is not a major news organisation. The article references the ICO's guidance, which is a reputable source. However, the article does not provide direct links to the ICO's guidance or other primary sources, making it difficult to assess the accuracy of the information presented. The lack of direct links to primary sources raises concerns about the article's reliability.

Plausibility check

Score: 7

Notes:

The article discusses the ICO's draft guidance on agentic AI, which aligns with recent regulatory developments in the UK. The Competition and Markets Authority's research paper from 9 March 2026 and the Digital Regulation Cooperation Forum's foresight paper from 31 March 2026 support the plausibility of the article's claims. However, the article does not provide direct quotes or detailed references to these sources, making it difficult to fully verify the information presented. The lack of direct references to primary sources reduces the overall plausibility of the article.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article reports on the ICO's draft guidance on agentic AI, referencing recent regulatory developments. However, it lacks direct quotes and detailed references to primary sources, making it difficult to verify the accuracy of the information presented. The reliance on a single source and the absence of direct links to primary sources raise concerns about the article's originality and reliability. Given these issues, the article does not meet the necessary standards for publication.