The Internet Archive’s Wayback Machine faces growing pushback from publishers that are restricting access over fears its archived content is being exploited for AI training, a trend that threatens the integrity of the historical digital record.

The Internet Archive’s Wayback Machine is facing an awkward consequence of the AI boom: the same public record it has spent decades preserving is increasingly being treated by publishers as a potential source of training data.

According to Nieman Lab, 241 news sites in nine countries now block at least one of the Internet Archive’s four crawling bots, among them The New York Times and Reddit. The Guardian has taken a different approach: rather than blocking the crawlers outright, it limits what appears through the Archive’s interface and API, making archived versions harder for ordinary users to find and use.

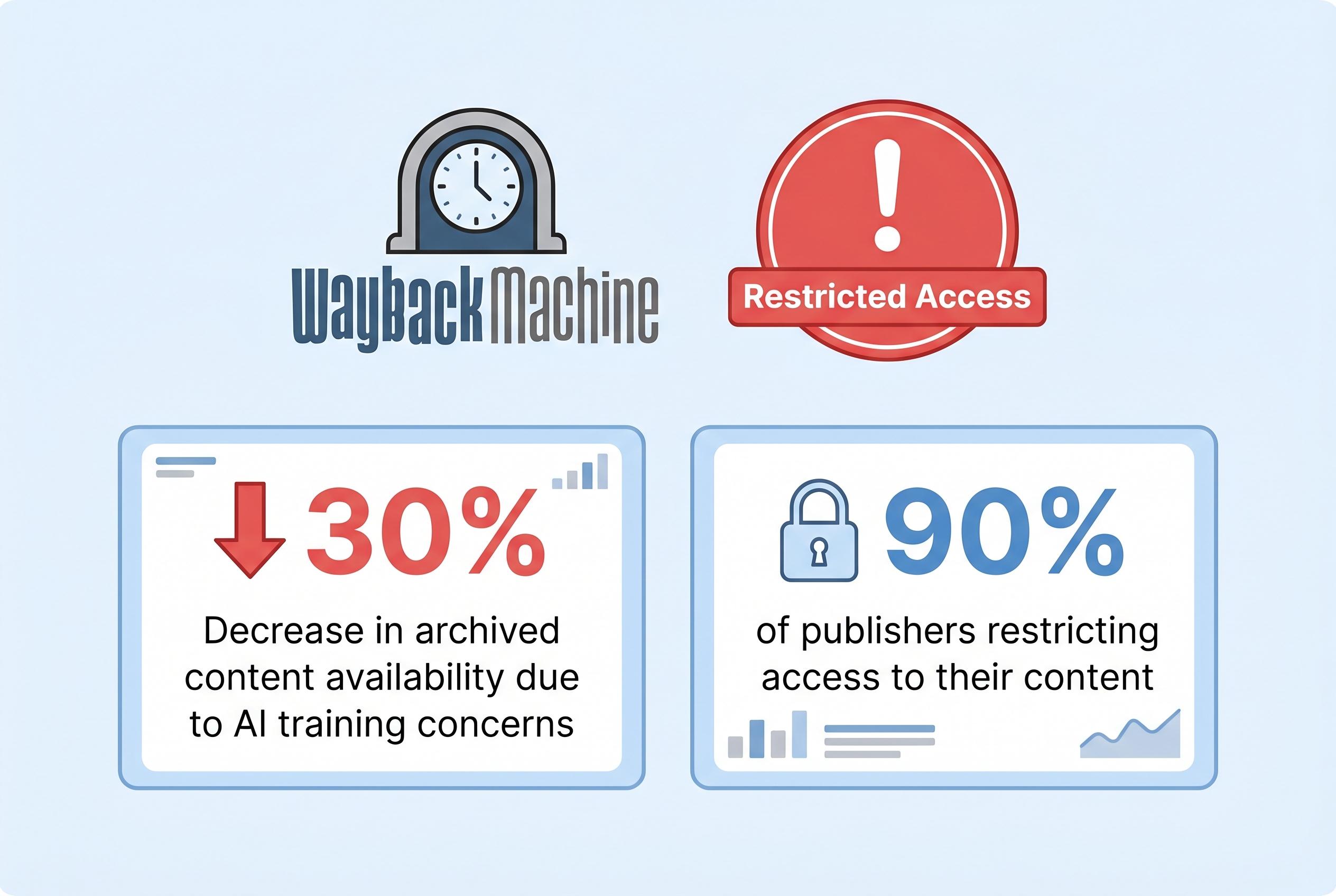

The shift reflects a broader fear that large language models are being trained on archived material without permission. Reported concerns over AI scraping have also helped drive similar restrictions at other sites, and a separate U.S. court ruling against Anna’s Archive has reinforced how aggressively copyright and anti-circumvention claims are now being tested in cases involving scraped digital material. Yet the Internet Archive says its systems are built for preservation and public access, not industrial-scale data harvesting.

Mark Graham, who directs the Wayback Machine, has argued that the archive already uses controls to curb abuse and block large-scale extraction. In comments reported by Nieman Lab and elsewhere, he and other defenders of the Archive have warned that punishing preservation tools for the behaviour of AI firms risks damaging journalism, research and historical accountability.

That concern is not abstract. The Internet Archive’s backers point out that many publishers rely on it when their own content disappears, changes or is removed entirely. If major news organisations continue to withdraw access, the result could be a thinner historical record of the web, with fewer traces of events, reporting and public debate available to researchers and the public.

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes: The article was published on April 20, 2026, and discusses recent developments regarding media sites blocking the Internet Archive's Wayback Machine. Similar reports have appeared in the past week, indicating the topic is current. However, the article does not provide specific dates for the blocking actions, making it difficult to assess the exact timeline of events.

Quotes check

Score: 7

Notes: The article includes direct quotes from Mark Graham, director of the Wayback Machine, and other sources. While these quotes are attributed, they are not accompanied by direct links to the original sources, making independent verification challenging. The absence of direct citations raises concerns about the accuracy and context of the quotes.

Source reliability

Score: 6

Notes: The article is published by The Week, a reputable news outlet. However, the piece relies heavily on secondary sources and does not provide direct links to primary sources or official statements from the Internet Archive or the media organizations involved. This lack of direct sourcing diminishes the overall reliability of the information presented.

Plausibility check

Score: 8

Notes: The claims about media sites blocking the Wayback Machine due to AI scraping concerns are plausible and align with recent reports from other reputable outlets. However, the article does not provide specific examples or detailed evidence to support these claims, which would strengthen the argument.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary: The article discusses recent actions by media sites blocking the Internet Archive's Wayback Machine due to concerns over AI scraping. While the topic is current and plausible, the lack of direct citations, specific examples, and detailed evidence diminishes the overall reliability and verifiability of the information presented. The absence of primary sources and official statements raises concerns about the accuracy and context of the claims made.