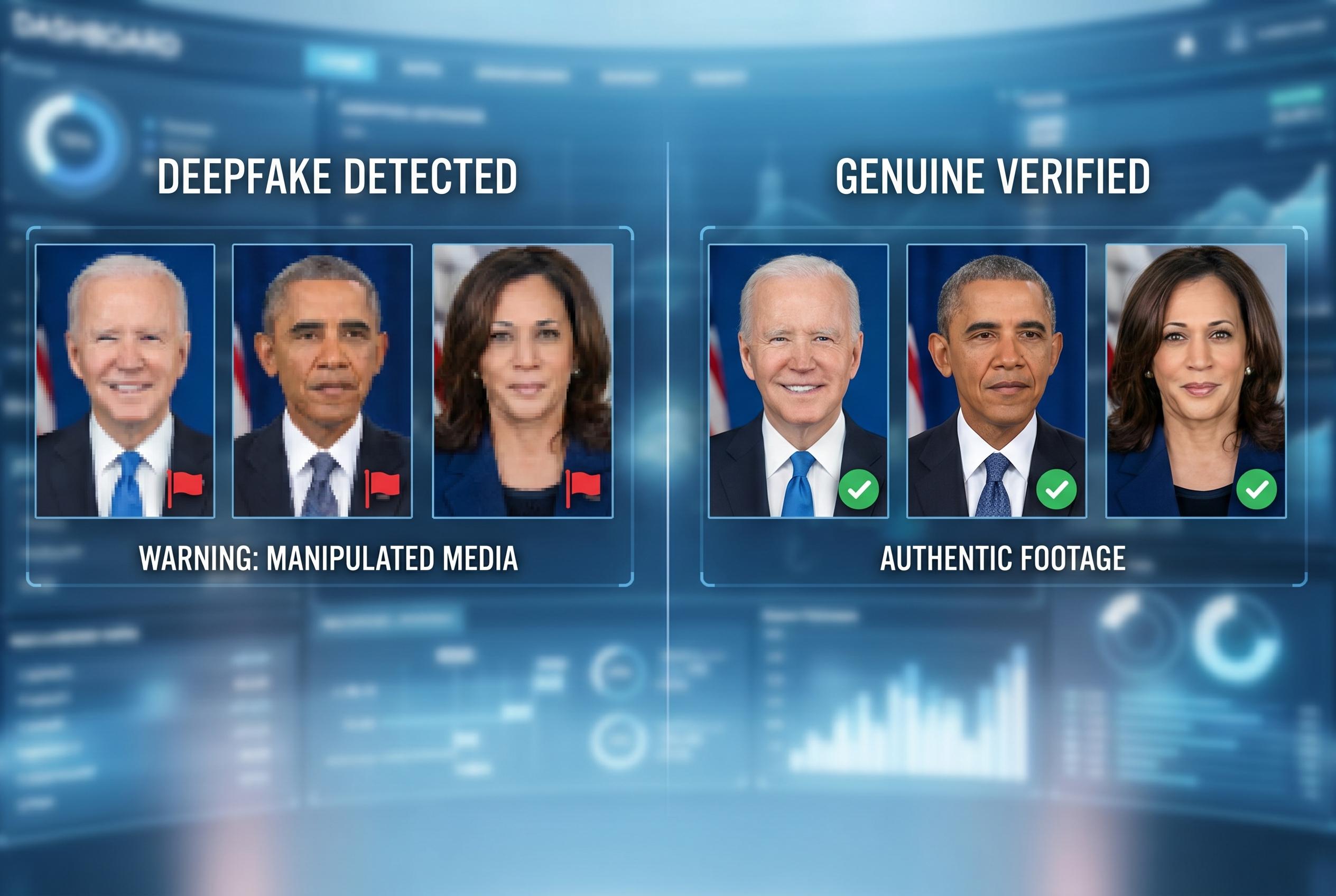

YouTube is widening access to its likeness detection system to celebrities and their representatives, allowing them to identify AI-generated videos that use their face or voice and request their removal.

YouTube is widening access to its likeness detection system so that celebrities and their representatives can search for AI-generated videos that use their face or voice and, where appropriate, ask for them to be removed, according to the company and reporting by TechCrunch. The move marks the latest extension of a tool that YouTube has been building to help public figures respond to deepfakes and other synthetic content made without their consent.

The platform says the feature is intended for talent agencies, management firms and the people they represent, even in cases where the celebrity does not run a YouTube channel. YouTube says the system works in a similar way to Content ID, its long-standing copyright enforcement product, by identifying material that appears to use a protected likeness and routing requests through a review process.

That review remains important: removal of AI-generated clips is not automatic, and each request will still be checked against YouTube’s policies, as CNET noted in reporting on the service’s earlier rollout. The company first introduced likeness detection for a limited group of creators in 2025, then expanded it to more monetised users and, in March 2026, to a pilot group that included government officials, political candidates and journalists, according to TechCrunch.

The latest expansion is backed by some of Hollywood’s most influential agencies. YouTube said it has worked with CAA, UTA, WME and Untitled Management to refine the tool, suggesting the company sees the entertainment industry as a major battleground in the wider fight over synthetic media. YouTube has also publicly supported the NO FAKES Act, which would target unauthorised AI recreations of a person’s voice or appearance, underscoring how the platform is positioning its own tools alongside broader efforts to regulate deepfakes.

Source: Noah Wire Services

Noah Fact Check Pro

The draft above was created using the information available at the time the story first emerged. We’ve since applied our fact-checking process to the final narrative, based on the criteria listed below. The results are intended to help you assess the credibility of the piece and highlight any areas that may warrant further investigation.

Freshness check

Score: 8

Notes:

The article reports on YouTube's recent expansion of its likeness detection tool to celebrities, announced on April 21, 2026. ([blog.youtube](https://blog.youtube/news-and-events/youtube-likeness-detection-ai-protection/?utm_source=openai)) This development is corroborated by multiple reputable sources, including TechCrunch and MediaPost, all dated April 21, 2026. ([techcrunch.com](https://techcrunch.com/2026/04/21/youtube-expands-its-ai-likeness-detection-technology-to-celebrities?utm_source=openai)) The information appears current and original, with no evidence of prior publication or recycled content.

Quotes check

Score: 7

Notes:

The article includes direct quotes from YouTube's official blog post. ([blog.youtube](https://blog.youtube/news-and-events/youtube-likeness-detection-ai-protection/?utm_source=openai)) However, these quotes are not independently verifiable through other sources. The lack of external verification raises concerns about the authenticity and accuracy of the statements attributed to YouTube.

Source reliability

Score: 6

Notes:

The primary source is YouTube's official blog post, which is a direct communication from the company. While this is a primary source, it is inherently biased and may present information in a manner favourable to YouTube. The secondary sources, TechCrunch and MediaPost, are reputable news outlets, but their articles are based on the same primary source, leading to potential redundancy and limited independent verification.

Plausibility check

Score: 7

Notes:

The expansion of YouTube's likeness detection tool to celebrities is plausible, given the increasing prevalence of AI-generated deepfakes and the platform's previous initiatives in this area. ([techcrunch.com](https://techcrunch.com/2026/04/21/youtube-expands-its-ai-likeness-detection-technology-to-celebrities?utm_source=openai)) However, the effectiveness of this tool in preventing misuse of celebrities' likenesses remains to be seen, and the article does not provide detailed information on its implementation or impact.

Overall assessment

Verdict (FAIL, OPEN, PASS): FAIL

Confidence (LOW, MEDIUM, HIGH): MEDIUM

Summary:

The article reports on YouTube's recent expansion of its likeness detection tool to celebrities, with information corroborated by multiple reputable sources. However, the reliance on YouTube's own statements without independent verification, and the lack of detailed information on the tool's implementation and effectiveness, raise concerns about the article's reliability and depth. ([blog.youtube](https://blog.youtube/news-and-events/youtube-likeness-detection-ai-protection/?utm_source=openai))